The Complexities of Mimicking Humans is Just the Beginning

Article 3 of our Cobot Series: Advances in sensor technologies have enabled the cobot to sense its environment, internal state, relative position, and more, yet the complexity of human-machine collaboration is a puzzle still unfolding.

This is the third article of an eight-part series that explores how collaborative robots, or cobots, are transforming industrial workspaces. The series surveys the technologies that have converged to make robotic influence possible, unpacks the unique engineering challenges robotics poses, and describes the solutions that are leading the way to the influential robots of the future.

The articles were originally published in an e-magazine, and have been substantially edited by Wevolver to update them and make them available on the Wevolver platform. This series is sponsored by Mouser, an online distributor of electronic components. Through their sponsorship, Mouser Electronics supports spreading knowledge about collaborative robots.

Complex Sensing Requirements

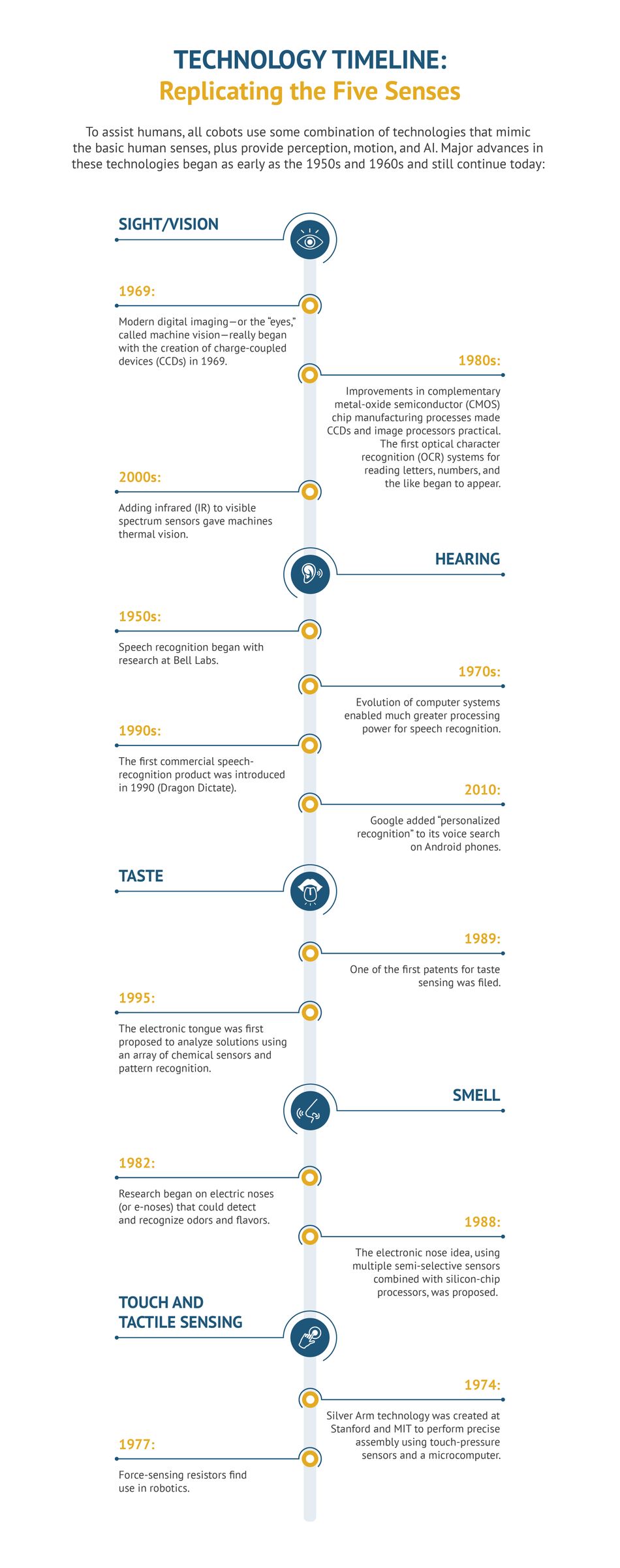

Developing collaborative robots requires creating many complex systems to sense, communicate, and move alongside humans safely and effectively. To assist humans, cobots use a combination of technologies that mimic the basic human senses of sight, hearing, touch, taste, and smell to complete their tasks and understand their environment. Additionally, cobots must communicate and move, requiring yet another set of systems with which to talk, understand, and assist their human co-workers.

Sensing Their Environment: Exteroception

To be useful to humans, cobots must have a range of environmental sensors to perform their tasks and stay out of trouble. These sensors belong to the realm of exteroception—that is, sensitivity to stimuli outside of the body. Common exteroceptive sensors in cobots include vision, hearing, touch, smell, taste, temperature, acceleration, range finding, and more.

Sensing Their Internal State: Interoception

To be self-maintaining, robots must also know their internal state. This corresponds to interoception in humans, or the ability to perceive internal states of the body like digestion, breathing, and fatigue. For example, a cobot must know when its batteries need charging and then go find a charger. Another example is a cobot’s ability to sense when its internal temperature is too high to work next to humans. Other interoception examples involve optical and haptic mechanisms, which we’ll cover later in this article.
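The battery and temperature examples above can be sketched as a small decision rule. This is a minimal illustration, not how any particular cobot works: the thresholds, the `InternalState` type, and the action names are all hypothetical.

```python
from dataclasses import dataclass

# Hypothetical thresholds, chosen only for illustration.
LOW_BATTERY_FRACTION = 0.20
MAX_SAFE_TEMP_C = 60.0


@dataclass
class InternalState:
    battery_fraction: float  # 0.0 (empty) to 1.0 (full)
    temperature_c: float     # internal temperature in degrees Celsius


def choose_action(state: InternalState) -> str:
    """Pick a self-maintenance action from interoceptive readings."""
    # Overheating is checked first because it affects nearby humans.
    if state.temperature_c > MAX_SAFE_TEMP_C:
        return "pause_and_cool"
    if state.battery_fraction < LOW_BATTERY_FRACTION:
        return "seek_charger"
    return "continue_task"
```

Checking temperature before battery level is a design choice in this sketch: a hot cobot stops working near people even if it also needs a charge.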

Sensing Their Relative Position: Proprioception

Awareness of the external and internal is critical for the operation and maintenance of a cobot, but to be useful to humans most cobots must also have proprioception. It is proprioception that allows the human body to move and control limbs without looking at them, thanks to interactions and interpretations from the brain.

In humans, this results in an awareness of the relative position of body parts and the strength and effort necessary for motion. Human proprioceptors are sensory receptors located in muscles, tendons, and joints. In cobots, the functions of proprioceptors are mimicked mostly by sensors built into the electromechanical actuators and motors, such as joint encoders and torque sensors. Proprioceptive measures consist of joint positions, joint velocities, and motor torques.
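To show how joint positions alone can tell a robot where its "hand" is without looking at it, here is a minimal sketch for a planar arm with two links. The link lengths and function names are assumptions made for this example; real cobot arms have more joints and move in three dimensions.

```python
import math


def end_effector_position(theta1, theta2, l1=0.4, l2=0.3):
    """Forward kinematics of a planar two-link arm.

    theta1, theta2: joint angles in radians (theta2 is relative to link 1).
    l1, l2: link lengths in meters (hypothetical values).
    Returns the (x, y) position of the arm's tip.
    """
    x = l1 * math.cos(theta1) + l2 * math.cos(theta1 + theta2)
    y = l1 * math.sin(theta1) + l2 * math.sin(theta1 + theta2)
    return x, y


def joint_velocity(prev_angle, curr_angle, dt):
    """Estimate joint velocity (rad/s) from two encoder readings dt seconds apart."""
    return (curr_angle - prev_angle) / dt
```

With both joints at zero the arm is fully stretched along the x-axis, so the tip sits at the sum of the two link lengths.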

Communicating with Humans

Voice and motion are not senses but are necessary for humans and robots to communicate and perform tasks. Voice communication is needed by cobots to clarify what is heard and to alert humans to potential dangers. Speech synthesizing hardware and software are used to artificially reproduce human speech.

Today, artificial intelligence (AI) is beginning to enable actual conversations between humans and cobots. Robots are learning to handle the nuances of human speech, such as chatting, half-phrases, laughter, and noncommittal responses like “uh huh.” Sharing resources, like the conversational floor, is another concept that robots are learning. To prevent talking over one another, robots are taught that only one speaker can “seize the floor” and talk at a time.
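The "one speaker at a time" rule can be modeled as a simple shared resource. The class below is a hypothetical sketch of that idea only; real dialogue systems rely on much richer cues, such as pauses and intonation, to decide when the floor changes hands.

```python
from typing import Optional


class ConversationalFloor:
    """Tracks which participant, if any, currently holds the floor."""

    def __init__(self) -> None:
        self._holder: Optional[str] = None

    @property
    def holder(self) -> Optional[str]:
        return self._holder

    def request(self, speaker: str) -> bool:
        """Try to seize the floor; succeeds only if it is free or already ours."""
        if self._holder is None or self._holder == speaker:
            self._holder = speaker
            return True
        return False

    def release(self, speaker: str) -> None:
        """Give up the floor; ignored if the speaker does not hold it."""
        if self._holder == speaker:
            self._holder = None
```

In this sketch, a cobot that receives `False` from `request` would wait and try again after the human calls `release`, rather than talking over them.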

Complexities of Collaboration

Humans use a combination of senses to move, operate, and communicate. One common example is body language, such as hand gestures accompanied by voice commands. For cobots, this type of collaboration requires vision for gesture recognition, speech recognition to perceive commands, and some level of AI to interpret the context of human communication.
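As a rough illustration of combining the two channels, the sketch below pairs an action word from recognized speech with the object a pointing gesture selects. The vocabulary, the function name, and the assumption that speech and gesture recognition have already produced clean outputs are all hypothetical simplifications.

```python
from typing import Optional, Tuple

# Hypothetical vocabulary: spoken words mapped to cobot actions.
KNOWN_ACTIONS = {"pick": "pick_up", "hold": "hold", "give": "hand_over"}


def interpret(speech: str, pointed_object: Optional[str]) -> Optional[Tuple[str, str]]:
    """Fuse a spoken command with a pointing gesture.

    speech: text produced by a speech recognizer.
    pointed_object: name of the object the gesture recognizer says the
        human is pointing at, or None if no gesture was detected.
    Returns (action, target), or None when either channel is missing,
    in which case the cobot should ask for clarification.
    """
    words = speech.lower().split()
    action = next((KNOWN_ACTIONS[w] for w in words if w in KNOWN_ACTIONS), None)
    if action is None or pointed_object is None:
        return None
    return action, pointed_object
```

Returning `None` instead of guessing reflects the point made earlier in the article: the cobot uses its voice to clarify what it heard.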

Continuing this point is the example of combined sensory input through vision and haptic (or “touch”) feedback. Consider the real-world example of a surgeon running a simulation of an operation before the actual event. The simulation can create a virtual reality (VR) where the surgeon can see and test the operation procedure. However, he or she has no way to sense the feeling of the scalpel’s contact with human tissue. This is where haptic feedback would help because it mimics the sense of touch and force during a computer simulation.

How can a machine communicate through touch? The most common form of haptic feedback is accomplished using vibration, such as the feeling created by a shaking, but silent, mobile phone. In the case of the surgeon, a linear actuator might replace a vibration motor. As the surgeon puts pressure on the simulated scalpel, a linear actuator that moves up and down places greater pressure on a portion of the surgeon’s body via a headband. This pressure corresponds to the pressure on the simulated scalpel.
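One simple way to make the actuator pressure "correspond" to the scalpel pressure is a clamped linear mapping. The sketch below assumes that approach; the force range and actuator travel are made-up values, and a real haptic device may use a different, non-linear mapping.

```python
def actuator_displacement_mm(force_n, max_force_n=10.0, max_travel_mm=5.0):
    """Map a simulated scalpel force to a linear actuator displacement.

    force_n: force on the simulated scalpel, in newtons.
    max_force_n: force at which the actuator reaches full travel (assumed).
    max_travel_mm: full actuator travel, in millimeters (assumed).
    """
    if max_force_n <= 0:
        raise ValueError("max_force_n must be positive")
    # Clamp to [0, 1] so the actuator never exceeds its mechanical limits.
    ratio = min(max(force_n / max_force_n, 0.0), 1.0)
    return ratio * max_travel_mm
```

The clamp matters as much as the mapping: a force spike in the simulation should never push the actuator past its safe travel.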

In the case of a cobot, a parallel example of haptic feedback is found in the cobot’s grippers (or hands). These grippers will often contain a wrist camera for recognition of a grasped object along with force-torque sensors that provide input for a sense of touch.

Most workers communicate with and control cobots by using buttons, joysticks, keyboards, or digital interfaces. However, just as they are for humans, speech and haptics can be effective communication mediums for cobots.

Gestures and eye movement are another sensory combination that can improve interplay between humans and cobots. Before humans point toward an object, they first look in the direction of the object. This anticipatory action can be picked up by a cobot’s vision to provide a tip-off regarding the intention of the human collaborator. Similarly, technology can help a cobot communicate its intentions to humans. Some robots now have projectors that highlight target objects or routes that the cobot will take.
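The gaze tip-off can be sketched as a geometry problem: given where the eyes are and which way they look, find the known object that lies closest to that line of sight. The function name, the 15-degree tolerance, and the assumption that a vision system already supplies 3D positions are all hypothetical.

```python
import math


def infer_gaze_target(eye_pos, gaze_dir, objects, max_angle_deg=15.0):
    """Return the name of the object nearest the gaze direction, or None.

    eye_pos: (x, y, z) position of the human's eyes.
    gaze_dir: (x, y, z) gaze direction vector (need not be normalized).
    objects: dict mapping object name to its (x, y, z) position.
    max_angle_deg: largest allowed angle between gaze and object (assumed).
    """
    gaze_norm = math.hypot(*gaze_dir)
    if gaze_norm == 0:
        return None
    best_name, best_angle = None, max_angle_deg
    for name, pos in objects.items():
        to_obj = [p - e for p, e in zip(pos, eye_pos)]
        dist = math.hypot(*to_obj)
        if dist == 0:
            continue
        cos_angle = sum(g * t for g, t in zip(gaze_dir, to_obj)) / (gaze_norm * dist)
        # Clamp before acos to guard against floating-point overshoot.
        angle = math.degrees(math.acos(max(-1.0, min(1.0, cos_angle))))
        if angle <= best_angle:
            best_name, best_angle = name, angle
    return best_name
```

For example, with the eyes at the origin looking along the x-axis, an object at `(1.0, 0.05, 0.0)` is selected while one at `(0.0, 1.0, 0.0)` is ignored because it lies 90 degrees off the line of sight.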

Summary

As with any emerging technology, there are still many challenges that face the world of collaborative robots as they work side by side with humans. Will humans sometimes find cobots more frustrating than useful?

Today, most robots have gotten pretty good at combining voice and visual recognition to assist humans. What is still lacking is the cobot’s ability to understand context and respond to complex situations. AI will be essential to enable cobots to truly interact, anticipate, and communicate, especially when they need to hand off certain complex tasks to humans. Among its other abilities, this level of AI will also go some way toward building a type of trust between humans and cobots that will further increase the potential of their collaboration.

This article was originally written by John Blyler for Mouser and substantially edited by the Wevolver team. It's the third article of a series exploring collaborative robots.

Article One introduces collaborative robots.

Article Two describes the history of Industrial Robots.

Article Three gives an overview of collaborative robot sensor technologies.

Article Four examines the balance between cobot safety and productivity.

Article Five discusses the development of cobot applications in manufacturing.

Article Six explores the challenges of motion control of robotic arms.

Article Seven looks at the potential of AI in cobots.

Article Eight discusses the social impact of cobots.

About the sponsor: Mouser Electronics

Mouser Electronics is a worldwide leading authorized distributor of semiconductors and electronic components for over 800 industry-leading manufacturers. They specialise in the rapid introduction of new products and technologies for design engineers and buyers. Their extensive product offering includes semiconductors, interconnects, passives, and electromechanical components.