Build TinyML models using your mobile phone

You can now build tiny machine learning (TinyML) models that interpret sensor data in real time, from detecting lions roaring to tracking sheep workouts.

Getting started with these models, however, usually requires a development board, a cross-compilation toolchain, and some knowledge of embedded development. Can't we do better? Most of us already carry a very capable device with multiple high-quality sensors in our pockets, and it's the perfect device for building your first TinyML model.

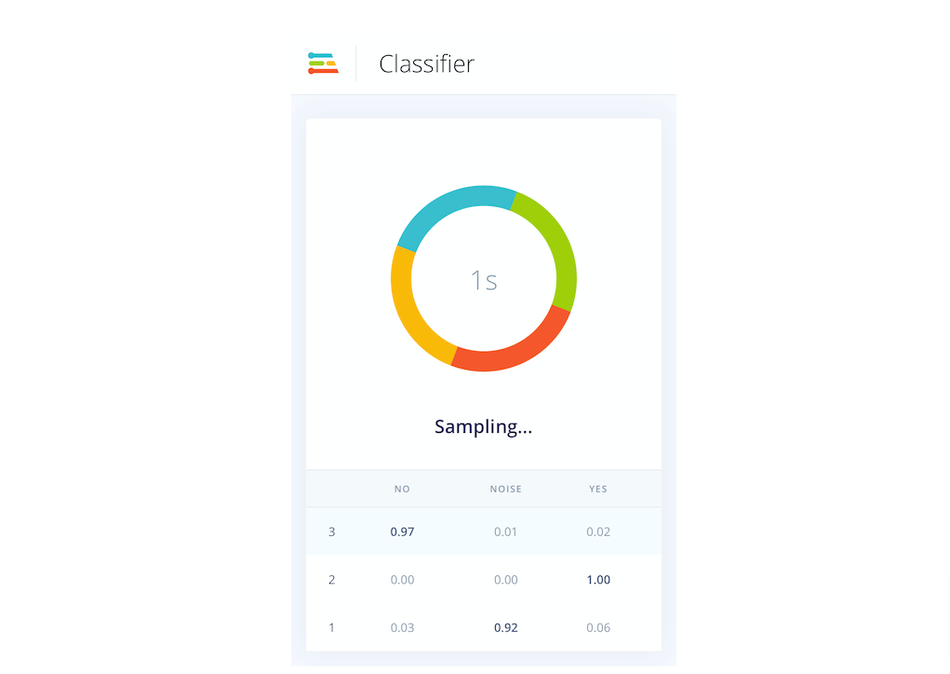

Edge Impulse is a lifecycle tool for TinyML. You connect a device (or a data store) to Edge Impulse, capture raw sensor data, train an ML model, and then generate optimized code that runs the model back on your device. Usually, we use very constrained microcontrollers for all of this (think: an 80 MHz processor and 128 KB of RAM), because the ultimate goal is to deploy the trained models back to battery-powered devices. But this is not a requirement: if a model works on a very constrained device, it will also work on a powerful device like your mobile phone.
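To get a feel for what that RAM budget means, here is a rough back-of-the-envelope sketch in TypeScript. Only the 128 KB figure comes from the example above; the sample rates, window lengths, and value sizes are illustrative assumptions, and `windowBytes` and `ramShare` are hypothetical helper names.

```typescript
// RAM budget from the microcontroller example in the text: 128 KB.
const RAM_BYTES = 128 * 1024;

// Bytes needed to hold one window of raw sensor data in memory.
function windowBytes(
  sampleRateHz: number,
  seconds: number,
  channels: number,
  bytesPerValue: number
): number {
  return Math.ceil(sampleRateHz * seconds) * channels * bytesPerValue;
}

// Share of the RAM budget a buffer would occupy, as a percentage string.
function ramShare(bytes: number): string {
  return ((100 * bytes) / RAM_BYTES).toFixed(1) + "%";
}

// Assumed workloads (not from the article):
// one second of 16 kHz mono audio stored as 16-bit integers...
const audioBytes = windowBytes(16000, 1, 1, 2);
// ...and two seconds of 3-axis accelerometer data at 100 Hz stored as 32-bit floats.
const motionBytes = windowBytes(100, 2, 3, 4);

console.log(`audio window:  ${audioBytes} bytes (${ramShare(audioBytes)} of RAM)`);
console.log(`motion window: ${motionBytes} bytes (${ramShare(motionBytes)} of RAM)`);
```

Under these assumptions the raw audio window alone takes roughly a quarter of the available RAM before the model itself is loaded, which is why models built for such devices have to stay small.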

Paired with the amazing power of the web (you can access sensors directly from JavaScript, and run C++ code through WebAssembly), we can replicate the full training experience on mobile phones without having to install an app. This goes beyond data collection: we can now also run the compiled machine learning model on your phone, without the need for an internet connection.
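As a minimal sketch of the sensor side, the TypeScript below groups accelerometer readings into fixed-size windows, the kind of chunk a model could then classify. `SampleWindow` is a hypothetical helper written for illustration, not part of any Edge Impulse API, and the window size of 125 in the comment is an arbitrary assumption. The browser wiring is shown only as a comment; it relies on the standard `devicemotion` event, which some browsers expose only after the user grants permission.

```typescript
// One accelerometer reading: acceleration along x, y and z.
type Sample = [number, number, number];

// Collects readings into fixed-size windows.
// Hypothetical helper for illustration only.
class SampleWindow {
  private readonly size: number;
  private buffer: Sample[] = [];

  constructor(size: number) {
    if (!Number.isInteger(size) || size <= 0) {
      throw new RangeError("size must be a positive integer");
    }
    this.size = size;
  }

  // Adds one reading. Returns the full window once `size` readings
  // have been collected, otherwise null.
  push(sample: Sample): Sample[] | null {
    this.buffer.push(sample);
    if (this.buffer.length < this.size) {
      return null;
    }
    const full = this.buffer;
    this.buffer = []; // start collecting the next window
    return full;
  }
}

// In a browser, the readings could come from the `devicemotion` event:
//
//   const win = new SampleWindow(125); // assumed window size
//   window.addEventListener("devicemotion", (e) => {
//     const a = e.accelerationIncludingGravity;
//     if (!a || a.x === null || a.y === null || a.z === null) return;
//     const ready = win.push([a.x, a.y, a.z]);
//     if (ready) {
//       // hand the window to the model here
//     }
//   });
```

Keeping the buffering logic separate from the browser event makes it easy to test without a phone, and the same windows can be used both for uploading training samples and for running the model locally.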

To get started, all you need is an Edge Impulse account and a modern smartphone. Head over to the Mobile phone tutorial to connect your phone to your Edge Impulse project and grab your first data samples. Then, follow one of the tutorials to build a gesture recognition system using the accelerometer, or to recognize sounds in your house using the microphone. Afterward, you'll have a trained machine learning model that works offline and can detect events on your phone in real time. And best of all, the same model can be deployed on small embedded devices as well.

We hope this is a great way to introduce you to the wonderful world of TinyML, and can't wait to see what you'll build!