A framework for explaining the power of Intelligent Automation

This article was first published on www.wevolver.com. The content of this article is inspired by the Amazon bestseller book “Intelligent Automation.”

Intelligent Automation (IA), also known as hyperautomation, is a set of technologies and methods for automating the work of white-collar professionals and knowledge workers. Here, we present a framework for explaining its power in terms of four main capabilities—Vision, Execution, Language, and Thinking & Learning—and how they can enable business transformations, with people and business goals at the center.

Vision

Computer vision is an area of technology that is progressing rapidly, with frequent breakthroughs. Coupled with deep learning, it allows a computer to make sense of what it sees and to make intelligent guesses about missing visual information, much as the human brain does for the eyes. In practice, large collections of digital images and videos are used to train machine learning algorithms, which enable computers to interpret visual input and automate tasks that would otherwise rely on the human visual system.

Applications of computer vision in a physical environment include recognizing objects (for example, in a robot navigating its physical environment) and interpreting signs and road markings (for example, in a self-driving car). However, it has even more applications in a digital environment.

Computer vision is used for intelligent character recognition (ICR), the more advanced descendant of optical character recognition (OCR). ICR can be used to digitize documents, such as invoices, contracts, or IDs, and extract and interpret the information in them.
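To make the "extract and interpret" step more concrete, here is a minimal sketch of what can happen after an ICR engine has turned a scanned invoice into text. The sample text, the field names, and the patterns are all assumptions made up for illustration; production systems typically rely on trained, layout-aware models rather than hand-written patterns.

```python
import re

# Hypothetical ICR output for a scanned invoice (illustrative data only).
OCR_TEXT = """ACME Supplies Ltd.
Invoice No: INV-2041
Date: 2021-03-15
Total due: EUR 1,250.00
"""

# Hypothetical field patterns, assumed for this sketch.
FIELD_PATTERNS = {
    "invoice_number": re.compile(r"Invoice No:\s*(\S+)"),
    "date": re.compile(r"Date:\s*(\d{4}-\d{2}-\d{2})"),
    "total": re.compile(r"Total due:\s*[A-Z]{3}\s*([\d,]+\.\d{2})"),
}


def extract_fields(text):
    """Return a dict of the fields found in the recognized text."""
    fields = {}
    for name, pattern in FIELD_PATTERNS.items():
        match = pattern.search(text)
        if match:
            fields[name] = match.group(1)
    if "total" in fields:
        # Normalize "1,250.00" to a number for downstream systems.
        fields["total"] = float(fields["total"].replace(",", ""))
    return fields


invoice = extract_fields(OCR_TEXT)
print(invoice)
```

The resulting dictionary is the kind of structured record that the Execution capability could then enter into an accounting system.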

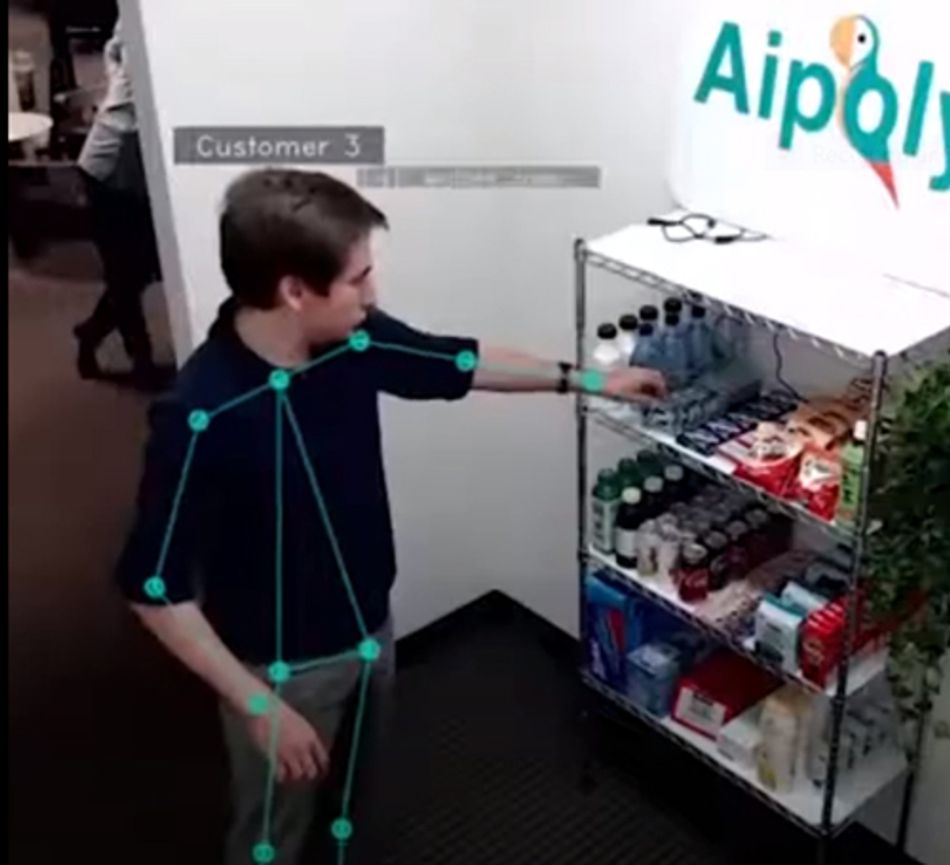

Computer vision can also automate the analysis of images and videos. This has a vast range of applications. It can automate medical diagnostics, improving outcomes and freeing up doctors’ time. It can provide retail store automation, such as Amazon Go, where cameras determine which items a customer has picked up and bill them accordingly, without the need for a human checkout assistant. It can be used in business process documentation, automating what is usually a lengthy and resource-intensive process by detecting the applications and objects that a computer user interacts with and then creating a flowchart of the process, complete with screenshots. Furthermore, computer vision can be used for biometrics, with applications in identification, access control, and surveillance.

A variety of machine learning models and algorithms are used to support the development and operation of computer vision applications. Nowadays, the most popular algorithms for computer vision tasks are based on deep neural networks and the deep learning paradigm. As a prominent example, Convolutional Neural Networks (CNNs) are widely used as data-driven feature extractors, i.e., to identify and extract features from images and videos. As another example, Artificial Neural Networks (ANNs) such as autoencoders are commonly used in tasks like facial recognition and object detection. Autoencoders are also very efficient at filtering, smoothing, and removing noise from images.
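The idea of a CNN as a feature extractor can be illustrated with the single operation at its core: sliding a small kernel over an image. The sketch below uses a toy 5x5 image and a hand-written vertical-edge kernel, both assumptions chosen for illustration. In a real CNN the kernel values are learned from training data, and many kernels are stacked across several layers.

```python
def cross_correlate2d(image, kernel):
    """Slide a square kernel over the image (no padding, stride 1).

    This is the sliding-window operation that CNN layers apply.
    """
    k = len(kernel)
    out_h = len(image) - k + 1
    out_w = len(image[0]) - k + 1
    output = []
    for i in range(out_h):
        row = []
        for j in range(out_w):
            total = 0
            for di in range(k):
                for dj in range(k):
                    total += image[i + di][j + dj] * kernel[di][dj]
            row.append(total)
        output.append(row)
    return output


# Toy grayscale image: dark left side (0), bright right side (9).
IMAGE = [[0, 0, 9, 9, 9] for _ in range(5)]

# Hand-written vertical-edge kernel (a CNN would learn such values).
KERNEL = [
    [-1, 0, 1],
    [-1, 0, 1],
    [-1, 0, 1],
]

feature_map = cross_correlate2d(IMAGE, KERNEL)
for row in feature_map:
    print(row)
```

The feature map has large values where the dark-to-bright edge falls inside the window and zeros over the uniform bright region, which is the sense in which the kernel "extracts" an edge feature.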

Execution

Execution involves actually doing things—accomplishing tasks in digital environments. This can include clicking on buttons, typing text, logging in and out of systems, preparing reports, and sending emails.

The execution capability acts as a glue to connect the other capabilities together in a streamlined way. For example, it can collect sales data using the Vision or Language capabilities, automatically convey the data to the Thinking & Learning capability for analysis, and compile and send out a report about the findings, with the help of the Language capability.

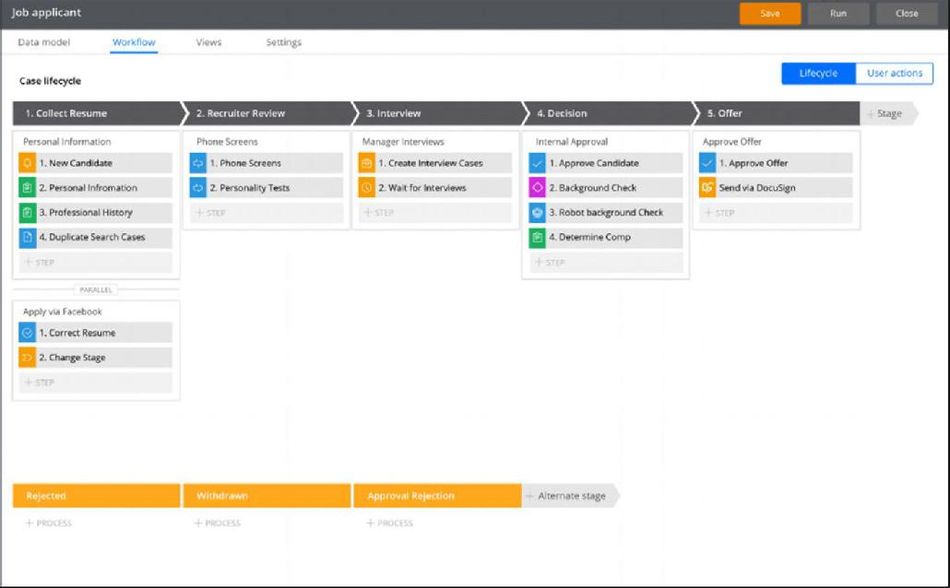

The key technologies supporting the Execution capability are smart workflow, low-code platforms, and robotic process automation (RPA). Smart workflow platforms help to automate predefined standard processes. (If existing processes have not yet been documented, this can be achieved using IA-powered business process documentation, as discussed in the previous section.) Low-code platforms allow business users without coding skills to develop automated programs. RPA, which is the most powerful of these technologies, is used to automate any task that a human can do on a computer, such as opening applications, clicking menu items, entering text, or copying and pasting. It learns by recording the actions of the human user and then automates them to save time. RPA programs can be thought of as configurable software robots that orchestrate many other enterprise applications in automated and intelligent ways. To do so, RPA software interfaces with different enterprise systems (e.g., Enterprise Resource Planning (ERP) and Customer Relationship Management (CRM) systems) and databases via appropriate connectors. Leveraging these connectors, RPA streamlines and automates enterprise workflows. This obviates the need for repetitive, human-mediated, and error-prone processes such as manually switching windows to copy information from one application and paste it into another.

In their simplest form, RPA bots automate software processes based on a set of business rules. These rules provide a deterministic, rule-based orchestration of enterprise systems. Rule-based RPA is the first step in RPA adoption and is quite straightforward to develop and deploy[1]. However, the future of RPA lies in the integration of statistical techniques to support intelligent workflows that continually learn and optimize themselves. To this end, it is possible to collect data about a process in order to train deep neural networks (e.g., CNNs) to optimize enterprise workflows[2]. RPA software will soon be capable of implementing self-configurable and self-optimized business processes by leveraging data about the performance of the automated workflows.
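A rule-based bot of the simplest kind can be sketched as an ordered list of business rules, where the first matching rule determines the action. The `Invoice` type, the thresholds, and the action names below are hypothetical and chosen only for illustration; they do not correspond to any particular RPA product.

```python
from dataclasses import dataclass


@dataclass
class Invoice:
    vendor: str
    amount: float
    has_purchase_order: bool


# Ordered business rules as (condition, action) pairs; the first match wins.
# All thresholds and action names are assumptions made for this sketch.
RULES = [
    (lambda inv: not inv.has_purchase_order, "return_to_vendor"),
    (lambda inv: inv.amount > 10_000, "route_to_manager"),
    (lambda inv: True, "auto_approve"),  # default rule
]


def decide(invoice):
    """Apply the rules in order and return the first matching action."""
    for condition, action in RULES:
        if condition(invoice):
            return action
    raise ValueError("no rule matched")


inbox = [
    Invoice("ACME", 250.0, True),
    Invoice("Globex", 48_000.0, True),
    Invoice("Initech", 900.0, False),
]
actions = [decide(inv) for inv in inbox]
print(actions)
```

Because the rules are deterministic, the same invoice always yields the same action. A learning-based approach would instead adjust thresholds or routing from data about past outcomes.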

Language

The Language capability enables machines to read, write, listen, speak, and interpret the meaning of natural human language. It’s used to extract useful information from unstructured documents, categorize text (for example, in spam filters), and perform sentiment analysis. It enables text-to-speech, speech-to-text, and predictive text keyboards. It’s also used to power chatbots, such as ANZ Bank’s Jamie, which is used to onboard new clients and guide them through the bank’s services, or Google Duplex, which can book restaurant tables and hair appointments over the phone. Another application is machine translation, such as Google Translate, which is used by 500 million people each day to translate over 100 languages. Natural language processing (NLP) used to be coded as a set of rules, but nowadays it works using deep learning: reading large amounts of text and noticing correlations and patterns, very much like how humans learn and use languages. Modern businesses use a variety of NLP applications, which range from extracting market sentiment about their products and services to chatbots and virtual assistants that boost the cost-effectiveness and scalability of their front office.

In recent years there has also been a surge of interest in NLP applications that can write entire articles and posts for blogging, content marketing, and copywriting purposes. Tools like Jarvis.AI, Copy.AI, Copyshark.AI, and GPT-3 produce very well-written essays on virtually any subject. In most cases, it is impossible to tell whether an essay was written by a human or an AI copywriting tool. Soon AI copywriters will also be able to automatically draw visuals to accompany their texts[1], leveraging approaches like X-LXMERT[2]. A comprehensive preview of such functionality has already been provided by OpenAI’s DALL-E project[3], which creates images from text.

From an engineering perspective, NLP applications employ machine learning algorithms to automatically process textual inputs. A variety of classical machine learning techniques (e.g., Support Vector Machines and Bayesian algorithms) are used to implement NLP systems. Nowadays, deep learning techniques are widely employed because of their effectiveness and accuracy in cases where large training corpora are available. For example, Long Short-Term Memory (LSTM) networks lead to effective NLP systems for speech recognition, text classification, and sentiment analysis. Nevertheless, most enterprise-class applications integrate and combine multiple machine learning models in ways that achieve high accuracy for the business problem at hand.
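As an illustration of the classical Bayesian techniques mentioned above, the following is a minimal sketch of a multinomial naive Bayes sentiment classifier with add-one smoothing. The four training sentences are invented for the example, and a real system would need a far larger corpus and proper tokenization.

```python
import math
from collections import Counter, defaultdict

# Tiny invented training set (illustrative only).
TRAINING_DATA = [
    ("great product fast delivery", "positive"),
    ("love the excellent support", "positive"),
    ("terrible service slow delivery", "negative"),
    ("broken product awful support", "negative"),
]


def train(examples):
    """Count words per label; these counts are the whole 'model'."""
    word_counts = defaultdict(Counter)
    label_counts = Counter()
    vocabulary = set()
    for text, label in examples:
        label_counts[label] += 1
        for word in text.lower().split():
            word_counts[label][word] += 1
            vocabulary.add(word)
    return word_counts, label_counts, vocabulary


def classify(text, model):
    """Return the label with the highest log-probability for the text."""
    word_counts, label_counts, vocabulary = model
    total_docs = sum(label_counts.values())
    best_label, best_score = None, -math.inf
    for label in label_counts:
        score = math.log(label_counts[label] / total_docs)  # prior
        total_words = sum(word_counts[label].values())
        for word in text.lower().split():
            if word not in vocabulary:
                continue  # ignore words never seen in training
            # Add-one (Laplace) smoothing avoids zero probabilities.
            count = word_counts[label][word] + 1
            score += math.log(count / (total_words + len(vocabulary)))
        if score > best_score:
            best_label, best_score = label, score
    return best_label


model = train(TRAINING_DATA)
print(classify("excellent fast support", model))
print(classify("awful slow service", model))
```

The classifier treats each word as independent evidence for a label, which is a strong simplification but often a workable baseline before moving to deep learning models.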

Thinking & Learning

The Thinking & Learning capability is about analyzing data, discovering insights, making predictions, and supporting decision-making. It can work autonomously, triggering automated process activities, or it can be used to augment human knowledge workers, providing them with insights to guide their decisions and actions.

The key technology behind this capability is machine learning—most of all, deep learning, which is the newest and most powerful component of machine learning. As already outlined, deep learning uses neural networks with multiple layers, each one processing and interpreting the data at a different level, inspired by how the human brain works. It learns autonomously from large amounts of training data, spotting patterns and correlations without being explicitly taught or programmed with any rules. Specifically, deep learning techniques outperform classical machine learning algorithms when faced with very large volumes of training data. Moreover, deep learning algorithms automatically select the features that are most important for the problem at hand, rather than expecting the data scientist to manually determine the features to be used. These are the reasons why deep learning techniques excel when faced with complex, unstructured data with numerous features. This makes them a primary choice for tasks like image classification, natural language processing, and speech recognition.
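The role of multiple layers can be shown with a very small network. The sketch below computes the XOR function, which a single layer of this kind cannot represent, by passing the inputs through a hidden layer and then an output layer. The weights here are set by hand purely for illustration; in deep learning they would be learned from training data, and real networks use differentiable activations rather than a hard step.

```python
def step(value):
    """Hard threshold activation (illustrative; real networks use e.g. ReLU)."""
    return 1 if value > 0 else 0


def dense(inputs, weights, biases):
    """One fully connected layer: weighted sum plus bias, then activation."""
    return [
        step(sum(w * x for w, x in zip(row, inputs)) + b)
        for row, b in zip(weights, biases)
    ]


# Hand-set parameters (assumed for this sketch, not learned).
HIDDEN_WEIGHTS = [[1, 1], [1, 1]]  # unit 1 acts like OR, unit 2 like AND
HIDDEN_BIASES = [-0.5, -1.5]
OUTPUT_WEIGHTS = [[1, -1]]         # "OR but not AND" gives XOR
OUTPUT_BIASES = [-0.5]


def predict(x1, x2):
    hidden = dense([x1, x2], HIDDEN_WEIGHTS, HIDDEN_BIASES)
    return dense(hidden, OUTPUT_WEIGHTS, OUTPUT_BIASES)[0]


for a in (0, 1):
    for b in (0, 1):
        print(a, b, predict(a, b))
```

The hidden layer turns the raw inputs into intermediate features, and the output layer combines those features, which mirrors the layer-by-layer interpretation described above.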

The Thinking & Learning capability also covers data management: acquiring, validating, cleaning, and storing the data needed for machine learning, and providing data visualizations to help guide human decision-makers. These tasks are interrelated and typically executed in an iterative fashion. To perform these activities, automation experts and data engineers preferably follow standards-based methodologies for data mining such as CRISP-DM (Cross-Industry Standard Process for Data Mining)[1], KDD (Knowledge Discovery in Databases)[2], and SEMMA (Sample, Explore, Modify, Model, Assess)[3].
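The validation and cleaning steps can be sketched in a few lines. The records, field names, and rules below are hypothetical and serve only to show the shape of such a step: normalize each record, reject those that fail validation, and drop duplicates before the data is stored or used for training.

```python
# Hypothetical raw records as they might arrive from different systems.
RAW_RECORDS = [
    {"customer": " Alice ", "amount": "120.50"},
    {"customer": "Bob", "amount": "n/a"},       # invalid amount
    {"customer": "", "amount": "75"},           # missing customer
    {"customer": "alice", "amount": "120.50"},  # duplicate after cleaning
]


def clean(record):
    """Return a normalized record, or None if it fails validation."""
    name = record["customer"].strip().title()
    if not name:
        return None
    try:
        amount = float(record["amount"])
    except ValueError:
        return None
    return {"customer": name, "amount": amount}


def prepare(records):
    """Clean all records and drop duplicates, keeping the original order."""
    seen = set()
    result = []
    for record in records:
        cleaned = clean(record)
        if cleaned is None:
            continue
        key = (cleaned["customer"], cleaned["amount"])
        if key in seen:
            continue
        seen.add(key)
        result.append(cleaned)
    return result


prepared = prepare(RAW_RECORDS)
print(prepared)
```

In practice this step is revisited repeatedly as new data quality issues surface, which is consistent with the iterative nature of the methodologies named above.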

The impact of IA capabilities on your business

Our aim is that this conceptual framework will equip you with the information you need for selecting vendors and choosing technologies for your IA journey, and fitting them into your existing organizational IT landscape.

Beyond the value that these four capabilities can deliver individually, though, the combination of them unlocks more impact than the sum of their parts. Combining the technologies broadens the scope of automation, from isolated tasks to continuous, automated, touchless end-to-end processes.

References

[1] B. Axmann and H. Harmoko, "Robotic Process Automation: An Overview and Comparison to Other Technology in Industry 4.0," 2020 10th International Conference on Advanced Computer Information Technologies (ACIT), 2020, pp. 559-562, doi: 10.1109/ACIT49673.2020.9208907.

[2] P. Martins, F. Sá, F. Morgado and C. Cunha, "Using machine learning for cognitive Robotic Process Automation (RPA)," 2020 15th Iberian Conference on Information Systems and Technologies (CISTI), 2020, pp. 1-6, doi: 10.23919/CISTI49556.2020.9140440.