How to Get the Most out of Topology Optimization

With the rise of additive manufacturing, topology optimization has come to the forefront. But how can this tool be used to its fullest potential in engineering design? In this blog post, you will learn how to use next-gen design tools to enhance your workflows and processes.

This article was first published on ntopology.com.

With a name like nTopology, people often assume that we only do topology optimization. And while that isn’t quite true (have you seen our implicit modeling?), we are doing some incredible things to remove the hassle from topology optimization-driven workflows. The engineering and mathematics behind this optimization process have been around for decades, but with the rise of industrial additive manufacturing and the geometric complexity it enables, we’ve seen rapid growth in the use of topology optimization.

But with that growth in usage comes a growing awareness of the frustrating limitations that appear when traditional design techniques are combined with topology optimization. Next-generation design tools like nTopology can help remove those limitations and enable engineers to create better-performing parts, faster.

What is Topology Optimization?

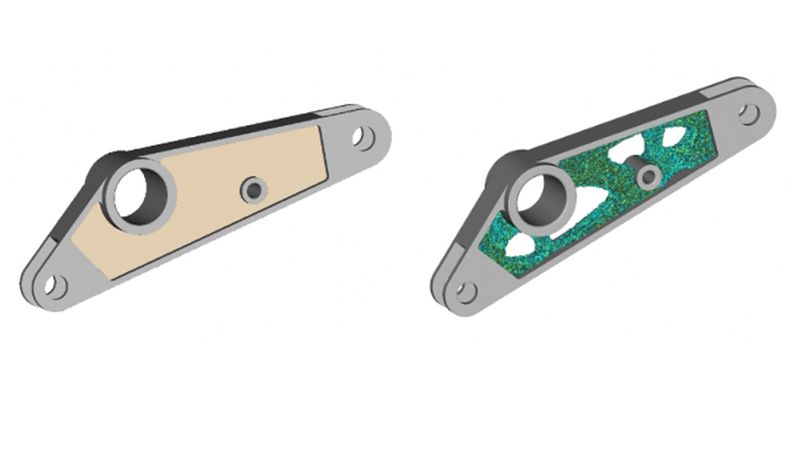

Topology optimization is a numerical method that optimizes the placement of material inside a user-defined design space. Given boundary conditions and constraints, it pursues an objective such as maximizing the stiffness of a component. Adoption has increased significantly as computing power has become accessible to every engineer and additive manufacturing has industrialized. In a general sense, topology optimization iteratively removes unnecessary material to identify the most efficient load path.
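For readers who want the math, here is one common textbook way to write such a problem: minimum compliance (maximum stiffness) under a volume limit, in a density-based (SIMP-style) form. This is a generic formulation offered as a sketch, not a statement of what any particular tool solves internally. The symbols are assumptions introduced here: $\rho_e$ is the density of element $e$, $v_e$ its volume, $V^{*}$ the allowed material volume, $\mathbf{K}$ the stiffness matrix, $\mathbf{u}$ the displacements, $\mathbf{f}$ the applied loads, $E_0$ the stiffness of solid material, and $p$ a penalization exponent.

$$
\begin{aligned}
\min_{\boldsymbol{\rho}} \quad & c(\boldsymbol{\rho}) = \mathbf{u}^{\mathsf{T}} \mathbf{K}(\boldsymbol{\rho})\, \mathbf{u} \\
\text{subject to} \quad & \mathbf{K}(\boldsymbol{\rho})\, \mathbf{u} = \mathbf{f}, \\
& \sum_{e} \rho_e v_e \le V^{*}, \\
& 0 < \rho_{\min} \le \rho_e \le 1, \\
\text{with} \quad & E_e = \rho_e^{\,p} E_0 .
\end{aligned}
$$

Lower compliance $c$ means a stiffer part, and the exponent $p > 1$ makes intermediate densities structurally inefficient, which pushes the result toward clear solid and void regions.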

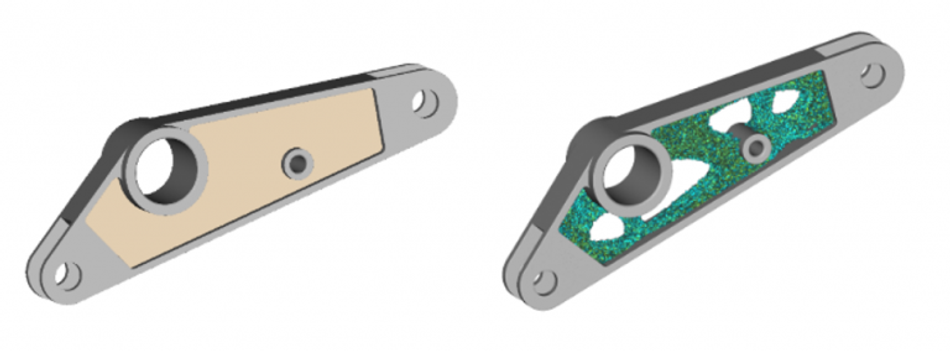

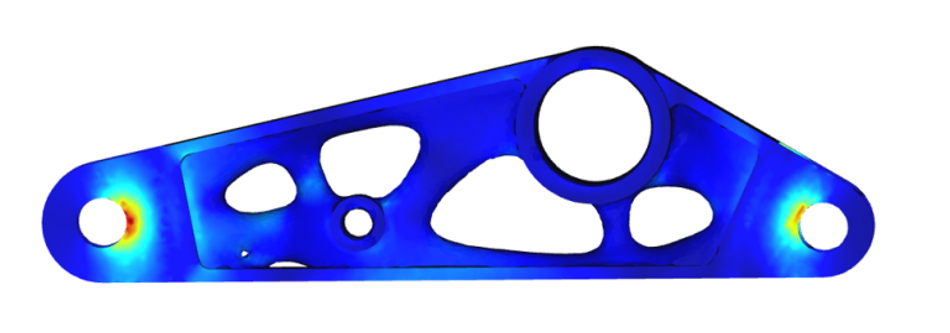

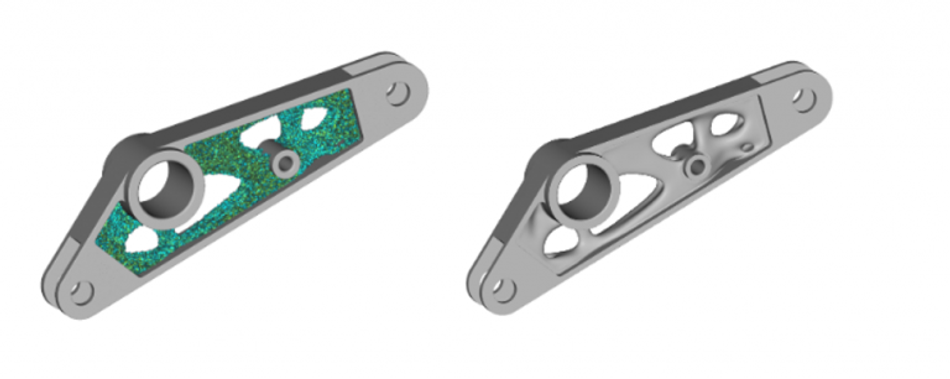

nTopology currently uses a density-based approach to topology optimization. After applying loads, constraints, and optimization objectives, one ends up with an element density field, which can vary between 0, indicating no material, and 1, indicating solid material. The user then chooses a threshold between those values to generate the component. While many tools, including nTopology, offer stress constraints for topology optimization, the part still needs to be validated afterward, which may mean changing the density threshold.
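To make the thresholding step concrete, here is a minimal Python sketch. It is not nTopology's API: the function names and the tiny hand-written density grid are hypothetical, chosen only to show how the choice of threshold changes how much material survives.

```python
def threshold_density(density, threshold=0.5):
    """Return a 0/1 grid: 1 where the element density is >= threshold."""
    if not 0.0 <= threshold <= 1.0:
        raise ValueError("threshold must be between 0 and 1")
    return [[1 if rho >= threshold else 0 for rho in row] for row in density]


def volume_fraction(solid):
    """Fraction of elements kept as solid material."""
    cells = [cell for row in solid for cell in row]
    return sum(cells) / len(cells)


# Hypothetical element densities (0 = no material, 1 = solid material).
density = [
    [0.05, 0.20, 0.90, 1.00],
    [0.10, 0.60, 0.95, 0.40],
    [0.00, 0.30, 0.70, 0.10],
]

for t in (0.3, 0.5, 0.8):
    kept = volume_fraction(threshold_density(density, t))
    print(f"threshold={t:.1f} -> volume fraction {kept:.2f}")
```

A lower threshold keeps more material and a higher one keeps less, which is why a failed validation run often sends the engineer back to adjust this single value.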

Using topology optimization as a design tool often makes a lot of sense: it can lightweight a design in a way an engineer could never manage manually, by precisely conserving material along load paths. However, once the design exploration phase is complete, many users run into major pain points: the output of the topology optimization process cannot immediately be manufactured, validated, or incorporated back into existing design workflows. These problems all stem from the fact that the density field is applied to discrete elements and not to a continuous object, which results in a jagged and unappetizing triangle soup.

Several steps can be taken to push the result to a form that can be validated, manufactured, or incorporated into further design processes. With traditional design tools, the process to get there tends to be tedious and littered with limitations, which often gives engineers a false impression of what is possible with topology optimization.

How nTopology Solves These Pain Points

Traditional Geometry Reconstruction

With traditional tools, one often applies mesh-based smoothing and loses the functional faces that are so important for manufacturing and further design processes. Or one is left with a problematic mesh that contains holes, overlapping triangles, and an array of other issues. Some traditional tools have an entirely manual process that requires users to tediously remodel the result of their topology optimization with sketch-based modeling tools. While this manual process can produce some great geometry, if you adjust the threshold to add slightly more material, you have to redo all of your modeling operations!

Next-Gen Geometry Reconstruction

In robust modeling software like nTopology, you can quickly apply smoothing techniques to end up with clean, organic geometry, with no need to worry about mesh problems or manual reconstruction. Afterward, simply add back functional regions with volumetric boolean operations that never fail. Best of all, if you decide to update your density threshold, all the operations replay automatically, so no work has to be redone manually.
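To illustrate why volumetric booleans are robust and why downstream operations can replay, here is a minimal Python sketch of booleans on implicit (signed distance) fields. It is a generic illustration of the idea, not nTopology's API or implementation. The spheres stand in for real geometry, and every name and dimension is hypothetical.

```python
import math


def sphere(cx, cy, cz, r):
    """Signed distance to a sphere: negative inside, positive outside."""
    def field(x, y, z):
        return math.sqrt((x - cx) ** 2 + (y - cy) ** 2 + (z - cz) ** 2) - r
    return field


def union(a, b):
    """Inside either shape: take the smaller field value."""
    return lambda x, y, z: min(a(x, y, z), b(x, y, z))


def difference(a, b):
    """Inside a but not b: intersect a with the complement of b."""
    return lambda x, y, z: max(a(x, y, z), -b(x, y, z))


def build_part(body):
    """Replayable recipe: add a boss back onto the body, then cut a bore."""
    boss = sphere(1.2, 0.0, 0.0, 0.5)  # hypothetical functional region
    bore = sphere(1.2, 0.0, 0.0, 0.2)  # hypothetical hole through the boss
    return difference(union(body, boss), bore)


def is_inside(field, x, y, z):
    return field(x, y, z) < 0.0


# Two stand-ins for optimization results at different density thresholds.
part = build_part(sphere(0.0, 0.0, 0.0, 1.0))
leaner_part = build_part(sphere(0.0, 0.0, 0.0, 0.8))

print(is_inside(part, 0.0, 0.9, 0.0))         # point near the body surface
print(is_inside(leaner_part, 0.0, 0.9, 0.0))  # same point, smaller body
print(is_inside(leaner_part, 1.2, 0.0, 0.0))  # center of the bore
```

Each boolean is just a `min` or `max` of two numbers at a query point, so there are no triangles to intersect and nothing to repair. Because `build_part` is an ordinary function of the upstream body, swapping in a different body reruns the same recipe, which is the "replay" behavior in miniature.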

Traditional Post-Optimization Validation

When validating a topology-optimized part, users can be left with geometry that has lost its associativity to the loads and constraints applied during the optimization. This requires the user to set up loads and boundary conditions again, or perhaps even to find entirely new ways to apply them if they were originally applied on a mesh basis. That may not be easy, because the same set of functional geometry no longer exists.

Next-Gen Post-Optimization Validation

Inside nTopology, loads and boundary conditions carry over from topology optimization and can be reused to validate the resulting geometry. The same loads and constraints can even be used when sending geometry to third-party validation tools like Ansys Mechanical, Abaqus, Nastran, LS-DYNA, and more.

Traditional Path to Manufacturing

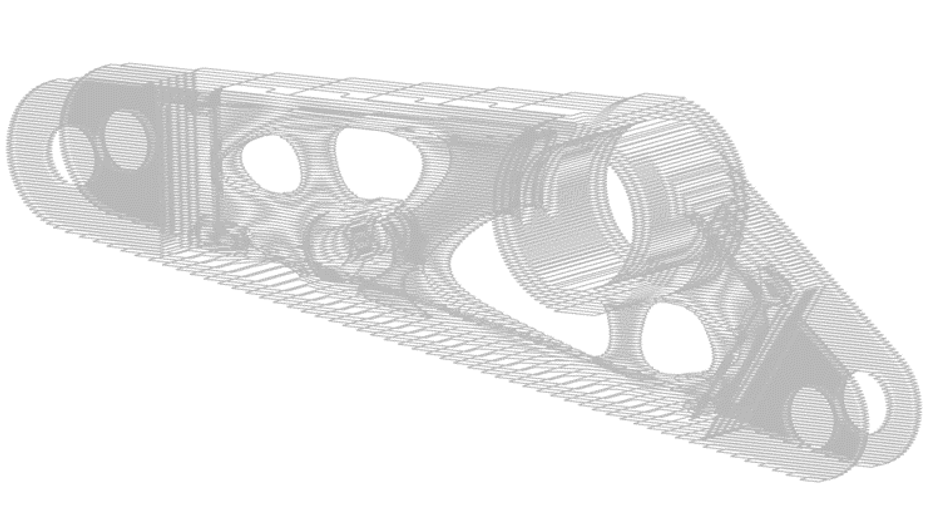

After painstakingly converting your topology optimization result into a surface mesh, you often spend additional time applying mesh repair to finally end up with data that are usable in your manufacturing process.

Next-Gen Path to Manufacturing

After automatically reconstructing geometry, users can directly slice their finished functional part (for select additive manufacturing machines). This eliminates the inefficient step of generating a mesh that is then sliced in build prep software.
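As a rough picture of what slicing without an intermediate mesh can look like, the Python sketch below samples an implicit field directly on a grid at each layer height. It is only an assumption-laden illustration: a real slicer produces contours and machine-specific toolpaths, and nothing here reflects nTopology's actual slicing pipeline. The unit sphere, grid size, and function names are all hypothetical.

```python
import math


def sphere_field(x, y, z, radius=1.0):
    """Signed distance to a sphere at the origin (negative inside)."""
    return math.sqrt(x * x + y * y + z * z) - radius


def slice_field(field, z, half_extent=1.5, samples=31):
    """Sample the field on a square grid at height z; True means material."""
    step = 2.0 * half_extent / (samples - 1)
    coords = [-half_extent + i * step for i in range(samples)]
    return [[field(x, y, z) <= 0.0 for x in coords] for y in coords]


def filled_cells(layer):
    """Number of grid samples that contain material in one layer."""
    return sum(cell for row in layer for cell in row)


for z in (0.0, 0.8, 1.2):
    layer = slice_field(sphere_field, z)
    print(f"z={z:.1f}: {filled_cells(layer)} filled samples")
```

The layer is computed straight from the field, so no surface mesh is generated or repaired along the way. The filled region shrinks as the slice height approaches the top of the sphere and is empty above it.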

Want to Dig Deeper?

One of the more popular uses of topology optimization is reducing the mass of a part while ensuring structural integrity. If you’re curious how nTopology approaches a start-to-finish topology optimization process, then check out this how-to video. We’ll illustrate our approach and address some of the considerations and challenges discussed above, such as how our implicit modeling technology enables you to automatically reconstruct usable geometry directly from topology optimization results.
