Robotic simulator for dynamic facial expression of pain in response to palpations

Researchers modeled dynamic facial expressions of pain using a data-driven perception-based psychophysical method with visual-haptic interaction of users.

![Student receives real-time facial expressions from the robotic platform [Left– virtual]; Experiment setup [Right– actual]](https://image.wevolver.com/eyJidWNrZXQiOiJ3ZXZvbHZlci1wcm9qZWN0LWltYWdlcyIsImtleSI6IjAuZnN3ZGhlMWY2ZnJSb2JvdGljU2ltdWxhdG9yLnBuZyIsImVkaXRzIjp7InJlc2l6ZSI6eyJ3aWR0aCI6ODAwLCJoZWlnaHQiOjQ1MCwiZml0IjoiY292ZXIifX19)

Student receives real-time facial expressions from the robotic platform [Left– virtual]; Experiment setup [Right– actual]

Virtual humans are increasingly used to train clinicians for interpersonal scenarios such as doctor-patient interactions. Visual feedback from involuntary facial expressions of pain in response to physical inputs is an essential source of information for skilled physicians and medical students. Standardized patients offer the highest level of fidelity for simulating patient behavior, which enables self-learning and improves training efficiency. However, this approach is time-consuming and requires patients who are diverse in ethnicity and gender if trainees are to achieve cultural competence. Although robotic simulators offer lower fidelity than standardized patients, they can simulate a greater variety of medical conditions and respond to several physical stimuli with feedback such as movement, haptic, visual, and auditory cues.

Some commercially available medical robotic simulators, such as Pediatric HAL, are among the most advanced pediatric patient simulators and can simulate emotions through dynamic facial expressions, movement, and speech. They offer gender and skin color variations, but the options are limited and cannot easily be changed during a training session. Other simulations, such as Virtual Patients, offer different demographics and medical histories with clinical scenarios but are often only verbal or dialogue-based. Combining these features in virtual and physical robotic simulators would enhance the training experience for clinicians.

A team of researchers affiliated with the Dyson School of Design Engineering, Imperial College London, and the School of Psychology and Neuroscience, University of Glasgow, has modeled dynamic facial expressions of pain using a data-driven, perception-based psychophysical method combined with visual-haptic inputs [1].

Robotic system for simulating dynamic facial expressions

In 2021, the team developed MorphFace [2], a 3D physical-virtual hybrid robotic face that represents the pain expressions of patients. It is based on the MakeHuman and FACSHuman systems and can simulate six human avatars. The system can be integrated with capture devices such as force sensors to drive facial expressions in real time. The modifications made to the existing system to address its limitations in rendering pain expressions across demographic backgrounds are presented in the paper titled “Simulating dynamic facial expressions of pain from visuo‑haptic interactions with a robotic patient.”

“We refine the paradigm by asking participants to perform actions (abdominal palpation) that would directly affect the exhibition of such facial expressions, thus enabling the modeling of facial expressions of pain exhibited within a given medical context and specific user interactions: pain received by the patient and subsequently the facial expressions of pain arise from palpation of the abdomen performed by the participant,” the team explains. “Thus, we anticipate that our system and procedure can be used to create dynamic pain facial expression simulation models of interactive physical examination procedures for diverse patient identities, ultimately providing bespoke medical training solutions to reduce erroneous judgments in individual medical students due to perceptual biases.”

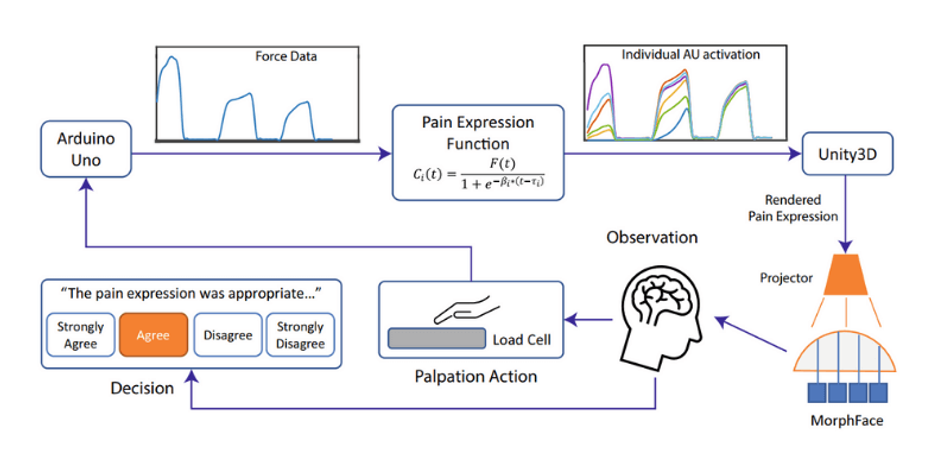

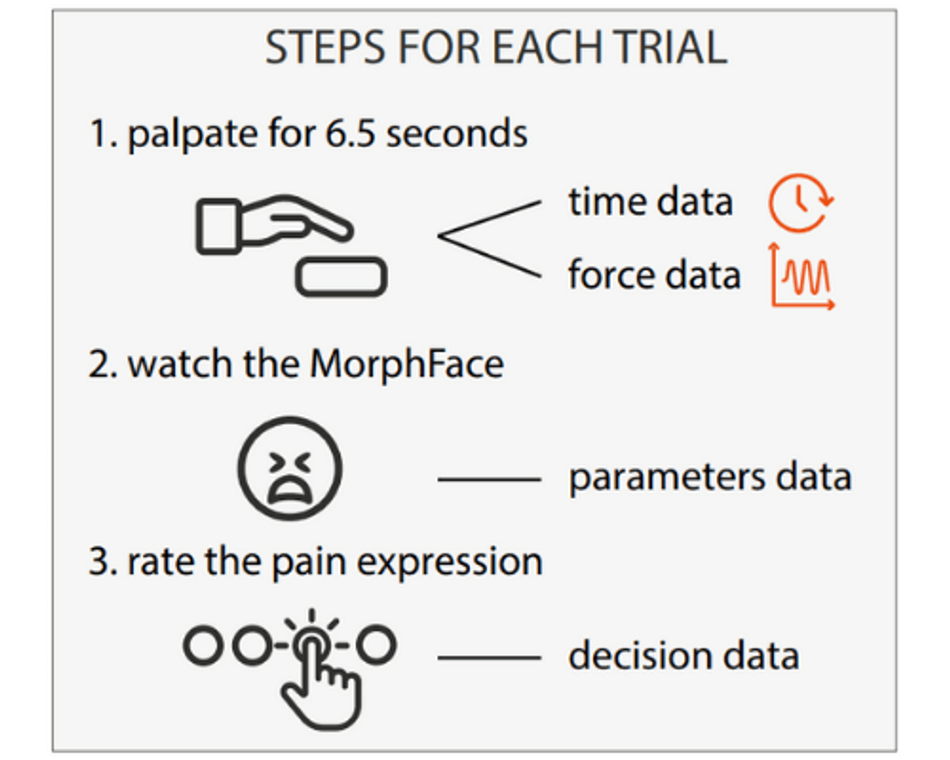

Participants palpated a silicone abdomen phantom placed on a load cell. The load cell captured the applied force, which was sent to Unity3D through an embedded device, an Arduino Uno, at a baud rate of 9600. The pain expression generation function, implemented in C#, describes the relation between palpation force and action unit (AU) activity intensity. The force triggered a real-time display of six pain expressions on the robotic face, MorphFace, controlled by the rate of change β and the activation delay τ. Each participant then rated the appropriateness of the facial expression on a 4-point scale from “strongly disagree” to “strongly agree” within a time limit of 6.5 seconds.
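To make the roles of β and τ concrete, here is a minimal Python sketch of a force-to-AU-intensity mapping. It is not the function from the paper, and it is written in Python rather than the C# the team used. It assumes a clamped linear ramp in which τ is a normalized force threshold below which the AU stays at rest and β is the slope above that threshold. The names `AUParams`, `au_intensity`, `UPPER_FACE`, and `LOWER_FACE`, the 20 N full-scale force, and all parameter values are hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AUParams:
    """Hypothetical per-AU parameters; names and values are illustrative."""
    beta: float  # rate of change: how quickly intensity grows with force
    tau: float   # activation delay: normalized force below which the AU rests


def au_intensity(force_n: float, params: AUParams, max_force_n: float = 20.0) -> float:
    """Map a palpation force in newtons to an AU intensity in [0, 1].

    Assumed form: a clamped linear ramp, NOT the function from the paper.
    """
    if max_force_n <= 0:
        raise ValueError("max_force_n must be positive")
    # Normalize the force to [0, 1] against an assumed full-scale value.
    x = min(max(force_n / max_force_n, 0.0), 1.0)
    # No activation until x exceeds tau, then rise with slope beta.
    raw = params.beta * (x - params.tau)
    return min(max(raw, 0.0), 1.0)


# Illustrative values only: the upper-face AU reacts earlier and faster.
UPPER_FACE = AUParams(beta=2.0, tau=0.2)
LOWER_FACE = AUParams(beta=1.0, tau=0.4)

if __name__ == "__main__":
    for force in (2.0, 8.0, 14.0, 20.0):
        upper = au_intensity(force, UPPER_FACE)
        lower = au_intensity(force, LOWER_FACE)
        print(f"{force:5.1f} N  upper={upper:.2f}  lower={lower:.2f}")
```

With these made-up settings, the upper-face AU begins to activate at a lower force and saturates sooner than the lower-face AU, which is the kind of difference the two parameters are meant to capture.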

What do the results have to say?

For all participants, the results showed a higher rate of change and a shorter delay for upper-face AUs than for lower-face AUs. The results also suggest that gender and ethnicity biases affect palpation strategies and the perception of pain-related expressions displayed on MorphFace. “We anticipate that our approach will be used to generate physical examination models with diverse patient demographics to provide focused training and address errors,” the team concludes. “These findings highlight the usefulness of visual-haptic interactions with a robotic patient as a method to quantify differences in behavioral variables relating to a medical examination with diverse participant groups and to assess the efficacy of future bespoke interventions aimed at mitigating the effects of such differences.”

The research paper is published in Scientific Reports under open-access terms. The datasets for the paper are publicly available in the OSF repository.

References

[1] Tan, Y., Rérolle, S., Lalitharatne, T.D. et al. Simulating dynamic facial expressions of pain from visuo-haptic interactions with a robotic patient. Sci Rep 12, 4200 (2022). https://doi.org/10.1038/s41598-022-08115-1

[2] T. D. Lalitharatne et al., "MorphFace: A Hybrid Morphable Face for a Robopatient," in IEEE Robotics and Automation Letters, vol. 6, no. 2, pp. 643-650, April 2021, DOI: 10.1109/LRA.2020.3048670.