Remote collaboration and real-time animation

With Xsens Motion Capture

RIFT is a feature-length animated film shot entirely in real time using Unreal Engine and the inertial motion capture of the Xsens MVN platform.

Epic Games’ Unreal Engine may be best known for powering smash-hit games like Fortnite, but the platform is increasingly being adopted across other industries, including film, television, and architecture. One such adopter, Hasraf “HaZ” Dulull of HaZ Film, is currently in production on RIFT, a feature-length animated film created entirely in real time with Unreal Engine and the inertial motion capture capabilities of Xsens.

With Xsens and the Live Link plug-in for Unreal Engine, the filmmakers can see mocap results in real time within the movie’s world, applied to the actual character assets. And with the Xsens MotionCloud platform, the London-based Dulull can easily collaborate with mocap specialist Gabriella Krousaniotakis in Florida, sharing data and processing it in the cloud.

Real-time revolution

Due for release at the end of 2021, RIFT is being created entirely within a real-time game engine. Unlike a traditional CG animated feature, which requires a vast team of animators and ample resources for rendering footage, RIFT is being produced inside the world HaZ Film has built in Unreal Engine. Thanks to Xsens, the studio can do this with a small, nimble team that continually refines the animation throughout production.

“There’s a huge pipeline that we’ve condensed into real-time. You’re not waiting hours or days for renders to be completed. We’re not a huge, massive team,” says Dulull. “This is a filmmaking revolution, and we can legitimately make a feature animated film with Unreal Engine.”

HaZ and his team work to very strict deadlines and are building the feature-length sequences at a rapid pace. Their multi-location workflow required a mocap solution that could capture the necessary motion data both quickly and accurately. Given Dulull’s previous experience with Xsens, our MVN motion capture suits were his first choice for the production.

Rapid mocap with Xsens

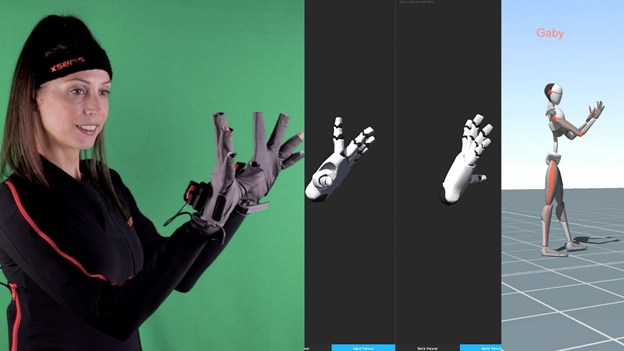

Leading the motion capture and virtual production pipeline R&D for HaZ Film is Gabriella Krousaniotakis. Entirely self-taught, she quickly learned how to capture the data and transport it for instant use in Unreal Engine. For RIFT, Gabriella uses the Xsens MVN suit to precisely capture character animations, right down to intricate hand positions and finger movements thanks to Xsens Gloves by Manus. Toy guns serve as stand-ins for weapons that will be created digitally in a later layer of the process.

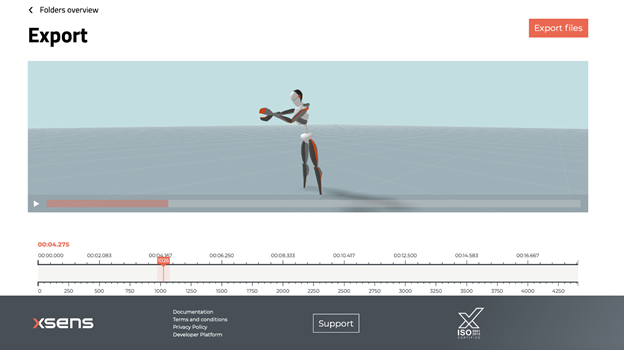

Once captured, everything is ported into Unreal and sent to HaZ in London for review the next day as part of their efficient, around-the-clock workflow. Not only are the project’s key collaborators nearly half a world away from each other, but they often move around or travel for work. This rapid prototyping and turnaround is aided by Xsens MotionCloud, the cloud-based data transfer and processing platform we released earlier in 2021. MotionCloud makes it easy for them to send data back and forth and access it from anywhere, removing barriers that might have impeded this kind of remote working model in the past.

“MotionCloud is awesome because we can access the Xsens data anywhere in the world and we don’t need to spend hours processing data – we get to see things in real-time which is key.”

Always moving forward

HaZ Film continues pushing ahead with this inventive production model, and the ability to work with accurate motion data in a real-time creation engine means that the film is continually being improved with every little tweak and enhancement. Nothing is wasted on this indie production: they can move fast, break barriers, and constantly refine along the way.

“The Xsens data is close to perfect, and we’re always looking to move as fast as possible to the next step,” says Dulull. “As artists, we’re always trying to get that final 10% that takes a film from ‘cool’ to ‘friggin’ awesome.’ We can do that because of the real-time pipeline, the speed of Xsens motion capture, and the accuracy of the Manus gloves.”