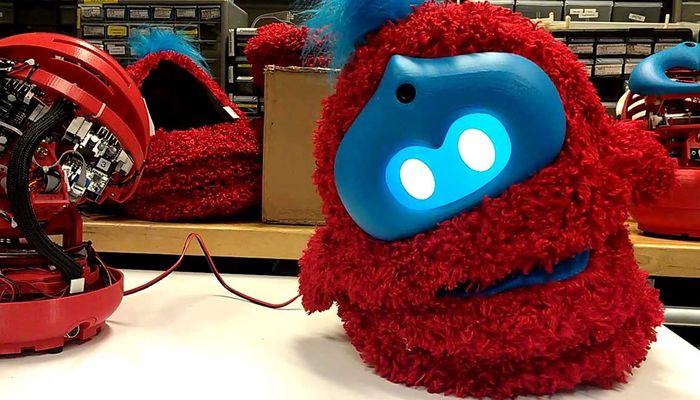

Tega Robot

Tega is a robot platform designed to support long-term, in-home interactions with children, with applications in early-literacy education from vocabulary to storytelling.

Technical Specifications

| Specification | Value |
|---|---|
| Length | 17.78 cm |
| Width | 17.78 cm |
| Height | 34.54 cm |
| Weight | 6.168856 kg |
| Degrees of freedom | 5 |
| Battery life | 6 hours |
| Sensors | |

Overview

Tega is a research platform designed to support long-term interactions with young children. The robot uses a smartphone both to display its facial expressions graphically and to handle computation, including behavioral control, sensor processing, and motor control for its five degrees of freedom: head up/down, body-tilt left/right, body-lean forward/back, body-extend up/down, and body-rotate left/right.
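As an illustration of the five degrees of freedom listed above, the sketch below models a single target pose as a small data structure. It is not Tega's actual control software: the class name `TegaPose`, the field names, and the normalized joint range of -1.0 to 1.0 are all assumptions made for this example, since the text does not give the real joint limits or units.

```python
from dataclasses import dataclass, fields

# Hypothetical normalized range applied to every joint.
# The real joint limits and units are not given in the text.
JOINT_MIN, JOINT_MAX = -1.0, 1.0


@dataclass(frozen=True)
class TegaPose:
    """One target pose, with one normalized value per degree of freedom."""

    head_up_down: float = 0.0
    body_tilt_left_right: float = 0.0
    body_lean_forward_back: float = 0.0
    body_extend_up_down: float = 0.0
    body_rotate_left_right: float = 0.0

    def clamped(self) -> "TegaPose":
        """Return a copy with every joint value limited to the assumed range."""
        values = {
            f.name: max(JOINT_MIN, min(JOINT_MAX, getattr(self, f.name)))
            for f in fields(self)
        }
        return TegaPose(**values)


# A request that overshoots the assumed range is clamped before use.
pose = TegaPose(head_up_down=0.4, body_extend_up_down=1.7).clamped()
print(pose.body_extend_up_down)  # 1.0
```

Keeping one named field per degree of freedom makes it hard to send a command to the wrong joint, and clamping in one place keeps out-of-range requests away from whatever layer drives the motors.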

For increased perceptual awareness, the phone’s sensing is augmented with an external camera that captures high-definition images with a wider field of view.

To withstand long-term, continual use, the efficient battery-powered system can run for up to six hours before it needs recharging. The robot is designed for robust and reliable actuator movements, including the ability to expand and contract rapidly through a lead-screw design between the torso and the head. This enables the robot to generate consistent and expressive behaviors over longer periods of time.

The robot can run autonomously or can be operated remotely by a person through a teleoperation interface. A variety of facial expressions and body motions can be enabled on the robot, such as laughter, excitement, and frustration. Additional animations can be developed on a computer model of the robot and exported via a software pipeline to a set of motor commands that can be executed on the physical robot, enabling rapid development of new expressive behaviors. Speech can be played back from pre-recorded audio tracks, generated on the fly with a text-to-speech system, or streamed to the robot via a real-time voice streaming and pitch-shifting interface.
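The text does not describe how the animation pipeline turns a computer-model animation into motor commands. One common approach, shown here only as a sketch, is to sample each joint's keyframes at a fixed command rate using linear interpolation. The function name `sample_track`, the `(time_s, value)` keyframe format, and the 4 Hz rate are assumptions for this example and are not taken from Tega's software.

```python
from bisect import bisect_right


def sample_track(keyframes, rate_hz):
    """Linearly interpolate (time_s, value) keyframes into evenly spaced samples.

    Hypothetical helper: assumes at least two keyframes with strictly
    increasing times, and returns one value per command tick.
    """
    times = [t for t, _ in keyframes]
    if len(times) < 2 or any(b <= a for a, b in zip(times, times[1:])):
        raise ValueError("need at least two keyframes with increasing times")

    n_steps = int(round((times[-1] - times[0]) * rate_hz))
    samples = []
    for i in range(n_steps + 1):
        # Time of this command tick, never past the last keyframe.
        t = min(times[0] + i / rate_hz, times[-1])
        # Index of the keyframe that ends the segment containing t.
        k = max(1, min(bisect_right(times, t), len(times) - 1))
        (t0, v0), (t1, v1) = keyframes[k - 1], keyframes[k]
        alpha = (t - t0) / (t1 - t0)
        samples.append(v0 + alpha * (v1 - v0))
    return samples


# One joint stretching up and back down over one second, sampled at 4 Hz.
keyframes = [(0.0, 0.0), (0.5, 1.0), (1.0, 0.0)]
commands = sample_track(keyframes, rate_hz=4)
print(commands)  # [0.0, 0.5, 1.0, 0.5, 0.0]
```

Running the same function once per joint would yield one command stream per degree of freedom. A production pipeline would likely use smoother interpolation and enforce velocity limits, which this sketch leaves out.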
