Enhancing Emergency Responders’ Situational Awareness Using XR Tools

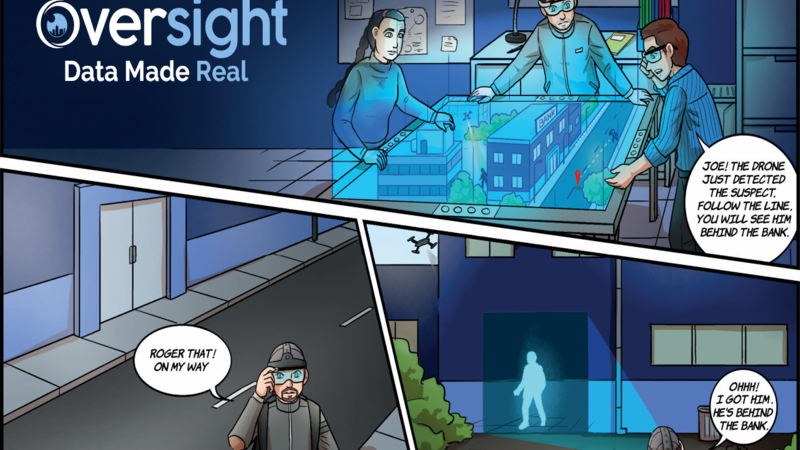

Using AR/VR technology, Oversight gives emergency responders the ability to literally SEE THROUGH WALLS and strengthens the connectivity between control rooms and field operators.

On May 24, 2022, in Uvalde, Texas, a gunman killed 19 children and two adults at an elementary school. The following part of the event timeline focuses on how the police functioned during the event:

- 11:28 a.m. - Shooter arrives at school

- 11:30 a.m. - A teacher calls 911

- 11:35 a.m. - “…Pete Arredondo, the chief of the school district’s police department, arrives at the scene around this time. He does not use his radio. (Arredondo wanted both hands on his gun if he encountered the shooter and believed the radio would have slowed him down, his attorney said.)”

- 12:03 p.m. - A student calls 911 from room 112 for a minute and 23 seconds and identifies herself in a whisper. Meanwhile, as many as 19 officers are positioned in the school’s hallway.

- 12:46 p.m. - Breach command is given. Arredondo gives his approval to enter the classroom. “If y’all are ready to do it, you do it,” he says, according to a transcript of police body-camera footage.

- 12:50 p.m. - Forces kill gunman

It took one hour and twenty minutes from the first 911 call until the gunman was neutralized!

The problem

Emergency responders all over the world need an immediate understanding of their surroundings. This critical information can make the difference between lives saved and a horrible disaster.

All forces involved share the same desire for precise information ASAP in a clear and visual way that will enable them to make smart decisions in real-time.

From investigating the horrible attack in Texas, we can specify a few points that were handled poorly:

Non-unified data management in control rooms led to inefficient management of the forces.

Control rooms and dispatch centers are built from multiple subsystems and multiple screens. These subsystems rely on:

- Extensive operator training

- The mistaken assumption that operators do not suffer from cognitive load

- The ability to communicate with each other simultaneously

These factors can cause fragmented data, which can easily lead to the loss of important information and in some cases can cost human lives.

In a six-year period, the number of IoT devices in the world is expected to double, which will make the cognitive load even heavier and the data almost impossible to process without data fusion systems.

Unclear visual picture and lack of geolocation for critical elements in the school.

Emergency responders who arrived at the scene were missing relevant visual information and an understanding of the current status at the elementary school, in particular regarding the locations of the attacker and the civilians.

Operators’ limited ability to free their hands for radio and tech devices (tablets, etc.).

A key fact is that Chief Arredondo focused only on his gun, claiming to be unable to free his hands for the radio, even at the price of being cut off from communication with others.

The flow of information from control rooms to the field.

It is clear from this timeline that information was available to the control room, streaming constantly to the 911 dispatch through voice calls.

Moreover, accessible data, such as that from the smartphones of the students or even of the gunman, could have been exploited. Unfortunately, it never reached the emergency responders because of poor distribution to the field.

The Solution

The ultimate solution for this type of scenario takes all the data that was streamed in real time to the 911 dispatch and combines it with any other accessible data, such as the students’ smartphone information, static cameras deployed in the school and the locations of other friendly forces on-site. The result gives every relevant emergency responder a comprehensive picture of what is going on, in a way that improves efficiency and drastically increases situational awareness.

Oversight develops Absorber - a visual, 3D Command-and-Control (C&C) solution based on augmented and virtual reality tools that enables many users to share and manage information simultaneously on top of a Geographic Information System (GIS). The system does not require a dedicated physical location and can be managed remotely.

Complete data fusion - one platform for all information stitched together in real-time

This product has a novel unified and distributed data management system. It accepts all devices that are map-locatable and can produce valuable data for the system (hereinafter, data collection agents): drones and other unmanned aerial vehicles, robots, IoT sensors, smartphones, XR (extended reality) headsets, PTZ or other stationary cameras, and other sources that produce localization or other visual information.

XR-based display overlaid on reality image

Another breakthrough is connecting information to the actual world by displaying data collection agents in real time on the user’s reality image. Seeing things without any physical limitations will open a whole new world for managing data.

Easy to operate

Information in such a system is controlled with the operator’s hand, head and eye gestures in a common and natural way. This will reduce the number of operators and the level of training required. Logic can be built in to eliminate the operator’s need to deal with other devices. For example, operators will be able to talk to a partner simply by focusing on them with their eyes.

Decentralized multiple-player system

This method for controlling agents is especially suitable for a rear control room operation while the user in the field can also participate by managing data. All data can be shared on one system, making the system mobile and not so dependent on hardware-based devices.

Bottom line

Absorber is a breakthrough all-in-one system that can independently operate throughout a developing situation and communicate information in real time. It enables an immediate flow of information and smart decision-making.

Absorber and Its Components

The different data collection agents Absorber will support share the ability to continuously convey their current location. This is a basic requirement of the system: it allows Absorber to accurately determine each agent’s location and its spatial relation to the others, and to display the agents on a hologram-based 3D digital table-top view board using XR headsets or smartphones.
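As an illustration of what "spatial relation" between two reporting agents can mean in practice, the following minimal sketch computes the great-circle distance and initial compass bearing between two position fixes. It is not Absorber's implementation: the `AgentFix` type, the function names and the spherical-Earth approximation are all assumptions made for this example.

```python
import math
from dataclasses import dataclass

EARTH_RADIUS_M = 6_371_000.0  # mean Earth radius; spherical approximation (assumption)


@dataclass(frozen=True)
class AgentFix:
    """Hypothetical position report from one data collection agent."""
    agent_id: str
    lat_deg: float
    lon_deg: float


def distance_m(a: AgentFix, b: AgentFix) -> float:
    """Great-circle distance between two fixes (haversine formula)."""
    phi1, phi2 = math.radians(a.lat_deg), math.radians(b.lat_deg)
    dphi = phi2 - phi1
    dlam = math.radians(b.lon_deg - a.lon_deg)
    h = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlam / 2) ** 2
    return 2 * EARTH_RADIUS_M * math.asin(math.sqrt(h))


def bearing_deg(a: AgentFix, b: AgentFix) -> float:
    """Initial compass bearing from a to b, in degrees clockwise from north."""
    phi1, phi2 = math.radians(a.lat_deg), math.radians(b.lat_deg)
    dlam = math.radians(b.lon_deg - a.lon_deg)
    y = math.sin(dlam) * math.cos(phi2)
    x = math.cos(phi1) * math.sin(phi2) - math.sin(phi1) * math.cos(phi2) * math.cos(dlam)
    return math.degrees(math.atan2(y, x)) % 360.0
```

A display layer could use these two values to place one agent relative to another on the table-top view. A production system would more likely rely on a geodesy library and an ellipsoidal Earth model.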

Absorber will serve as an add-on or replacement for agent management and can enhance operators’ situational awareness in real-time.

Collectors

Absorber includes “collectors” - software utilities for connecting devices to the system. Collectors convert the protocol of the connected device into Absorber’s proprietary protocol. That is what makes the system unified, since each device can have a different operating system, SDK, units of measurement or even global datum reference.

XR devices serve as the system display and also act as data collection agents, since most XR devices can report their relative location, produce a real-time map and report device status. By connecting them to a GPS device, they can also become map-locatable devices.
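The collector idea can be sketched as a set of small adapters, each translating one device's native payload into a single shared record. Everything below is hypothetical: Absorber's proprietary protocol is not described here, so the `UnifiedReport` fields, the two payload layouts (a drone reporting feet and milliseconds, a sensor reporting integer microdegrees) and the registry are assumptions chosen only to show unit normalization. Datum conversion is deliberately left out.

```python
from dataclasses import dataclass
from typing import Any, Callable, Dict, Mapping

FEET_TO_METERS = 0.3048


@dataclass(frozen=True)
class UnifiedReport:
    """Hypothetical unified record: degrees, meters, seconds."""
    agent_id: str
    lat_deg: float
    lon_deg: float
    alt_m: float
    timestamp_s: float


def drone_collector(msg: Mapping[str, Any]) -> UnifiedReport:
    # Assumed drone payload: altitude in feet, time in milliseconds.
    return UnifiedReport(
        agent_id=f"drone:{msg['serial']}",
        lat_deg=float(msg["lat"]),
        lon_deg=float(msg["lon"]),
        alt_m=msg["alt_ft"] * FEET_TO_METERS,
        timestamp_s=msg["time_ms"] / 1000.0,
    )


def sensor_collector(msg: Mapping[str, Any]) -> UnifiedReport:
    # Assumed IoT payload: integer microdegrees, altitude already in meters.
    return UnifiedReport(
        agent_id=f"sensor:{msg['id']}",
        lat_deg=msg["lat_e6"] / 1e6,
        lon_deg=msg["lon_e6"] / 1e6,
        alt_m=float(msg["alt_m"]),
        timestamp_s=float(msg["ts"]),
    )


# Registry mapping a device type to the adapter that understands its payload.
COLLECTORS: Dict[str, Callable[[Mapping[str, Any]], UnifiedReport]] = {
    "drone": drone_collector,
    "sensor": sensor_collector,
}


def collect(device_type: str, msg: Mapping[str, Any]) -> UnifiedReport:
    """Convert a native payload into the unified record, or fail loudly."""
    try:
        collector = COLLECTORS[device_type]
    except KeyError:
        raise ValueError(f"no collector registered for {device_type!r}") from None
    return collector(msg)
```

With this shape, supporting a new device type means writing one adapter and registering it; nothing downstream of `collect` needs to know the device's native units.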
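One way to picture how a GPS anchor makes a relative XR position map-locatable is the small-offset conversion below. It is a sketch under stated assumptions, not a description of how Absorber does it: the headset is assumed to report a local offset in meters (`right_m`, `forward_m`), the compass heading of its local forward axis is assumed to be known, and the Earth is treated as a sphere, which is only reasonable for offsets of a few hundred meters away from the poles.

```python
import math

EARTH_RADIUS_M = 6_371_000.0  # mean Earth radius; spherical approximation (assumption)


def local_to_geodetic(
    anchor_lat_deg: float,
    anchor_lon_deg: float,
    right_m: float,
    forward_m: float,
    heading_deg: float = 0.0,
) -> tuple:
    """Convert a local (right, forward) offset from a GPS anchor to (lat, lon).

    heading_deg is the compass direction of the local forward axis,
    measured clockwise from north.
    """
    h = math.radians(heading_deg)
    # Rotate the device-local offset into east/north components.
    east_m = right_m * math.cos(h) + forward_m * math.sin(h)
    north_m = -right_m * math.sin(h) + forward_m * math.cos(h)
    # Small-offset approximation: meters to angular change on a sphere.
    dlat = north_m / EARTH_RADIUS_M
    dlon = east_m / (EARTH_RADIUS_M * math.cos(math.radians(anchor_lat_deg)))
    return (
        anchor_lat_deg + math.degrees(dlat),
        anchor_lon_deg + math.degrees(dlon),
    )
```

For example, `local_to_geodetic(29.2, -99.78, right_m=0.0, forward_m=10.0, heading_deg=0.0)` moves the anchor 10 meters due north; the coordinates used here are illustrative only.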

The very use of immersive technology and holograms to represent information will make it possible to build a multi-user system that can share information even from remote locations.

Core

Absorber’s core components are a media server for managing videos from multiple devices, a map server that stores relevant geocell information for a comprehensive on-premises solution, and an interactive simulation engine that creates events and scenarios for an offline training solution. Data fusion logic for managing multiple agents will be built on top of that collaborative information.
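The simplest building block of such fusion logic is a shared picture that keeps only the most recent report per agent and hides agents that have gone silent. The sketch below shows that idea only; the `Report` and `FusedPicture` names, the fields and the five-second staleness threshold are assumptions for illustration, and real fusion logic would do far more (for example, correlating detections across sensors).

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True)
class Report:
    """Hypothetical unified position report from one agent."""
    agent_id: str
    lat_deg: float
    lon_deg: float
    timestamp_s: float


class FusedPicture:
    """Keeps the latest report per agent and filters out stale agents."""

    def __init__(self, max_age_s: float = 5.0) -> None:
        self._latest: Dict[str, Report] = {}
        self._max_age_s = max_age_s

    def ingest(self, report: Report) -> None:
        # Reports may arrive out of order; never replace a newer one with an older one.
        current = self._latest.get(report.agent_id)
        if current is None or report.timestamp_s >= current.timestamp_s:
            self._latest[report.agent_id] = report

    def snapshot(self, now_s: float) -> List[Report]:
        # Only agents heard from within max_age_s appear in the picture.
        fresh = (r for r in self._latest.values() if now_s - r.timestamp_s <= self._max_age_s)
        return sorted(fresh, key=lambda r: r.agent_id)
```

Every display, whether in the control room or in the field, could then render the same `snapshot`, which is one way to read the "one platform for all information" goal.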

Users and SDK

On the other side, we have the end users, who can be control room operators or even emergency responders. The system will also deliver an SDK to let other users build solutions on top of this collaborative information.