A review of autonomous vehicle safety and regulations

How safe is this rapidly spreading technology?

An Uber self-driving car involved in a crash

This article is part of Wevolver's research for the 2020 Autonomous Vehicle Technology Report. It focuses on the American perspective for reasons of data: the largest numbers of driverless cars, operators, and reports are all in the United States. As a car-centric culture, the U.S. is also a useful case study. Major safety issues not discussed here for brevity include vehicle hacking[1], liability[2], human interactions with autonomous vehicles[3,4], and infrastructure[5]. Reference material on these topics is available as cited.

Safety Third

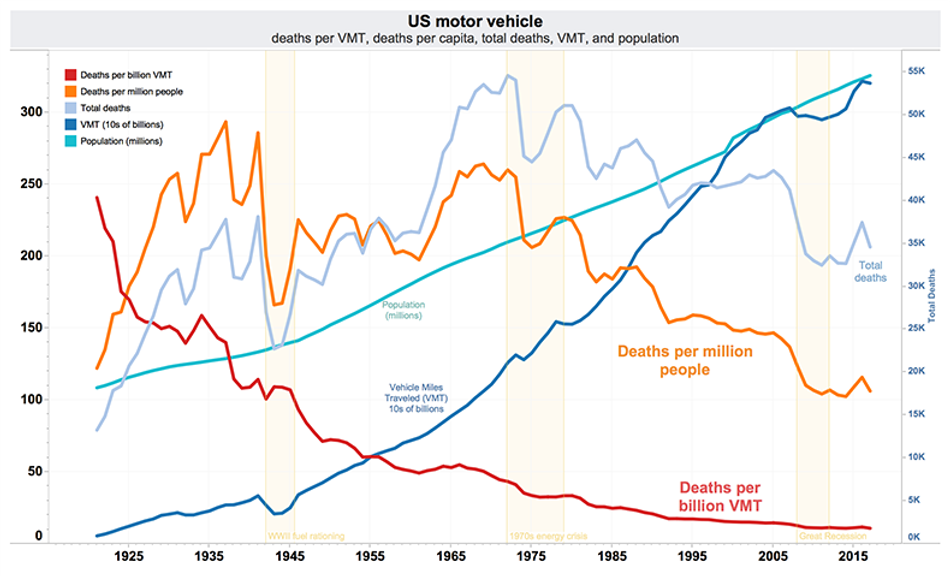

This graph shows how far we have come in terms of road safety in the U.S., from the days when unlicensed 14-year-olds were legally driving for hire in cities with no lanes or traffic lights to the modern safety standards enjoyed today[7]. Many think this curve still has room to fall thanks to so-called “driverless” cars, because 94% of road accidents are caused by driver error[6]. The nearly forty thousand deaths in road accidents every year in the U.S. alone[8] feel as unnecessary as those that occurred before seatbelts or airbags became mandatory.

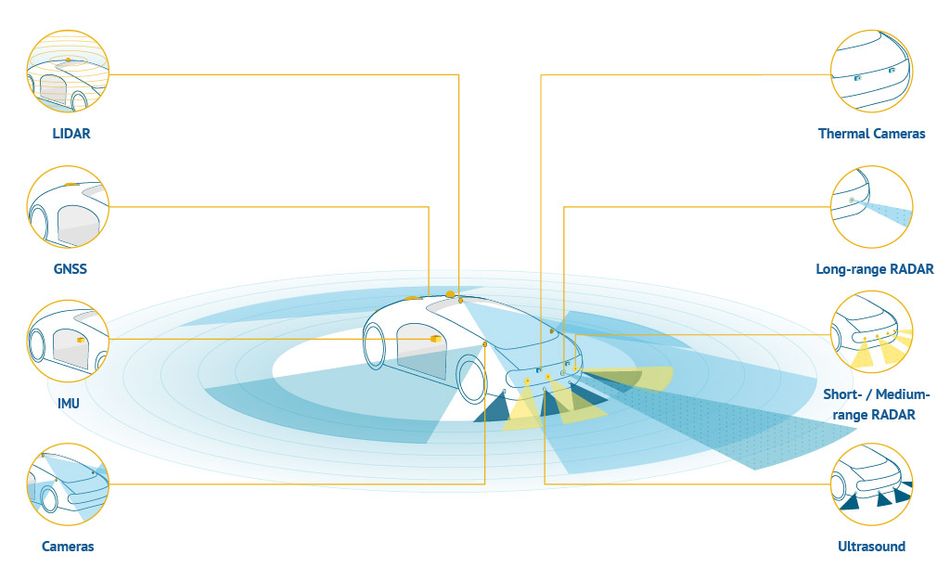

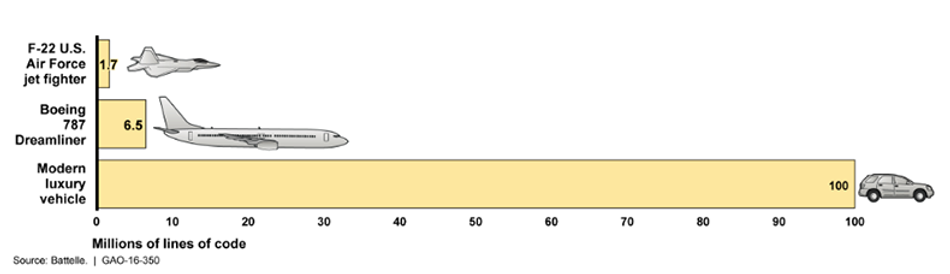

The first person killed by a driverless car on a public road died in Arizona in March 2018[9], but driverless cars are operating nearby today[10]. How safe is this rapidly spreading technology? We say “self-driving technology,” but what we are actually referring to is a collection of technologies thrown together to deliver a car capable of driving itself, as seen in the graph below[11]. What this graph is missing, however, is the software, which can be very complicated, as illustrated by the small bar graph below that[1].

Software, or how a machine thinks, is arguably the biggest challenge. Comparing the code in a 2016 luxury vehicle to the machine learning behind today’s autonomous vehicles[12] is unfair, since the two work differently. The push to develop artificial intelligence has been driven at least in part by how much more efficient it is for coding behavior at large scale. Previously, there had to be a specific line or lines of code for every possible interaction, and the machine simply would not work when it encountered something unexpected, at least until someone patched the software with more lines of code to account for the new conditions.

By contrast, machine learning lets digital logic, after a good deal of training, learn from information and decide what to do without a specific instruction for every situation that could ever happen. That requires a lot more computing power, observational data, and mathematically refined models to pull off, and other metrics show just how much more is required to run these new autonomous road vehicle operating systems compared to the Advanced Driver Assistance Systems in luxury vehicles of the last few years. Both rely on data from sensors to tell you if you are getting too close to an object, but only autonomous driving systems analyze and compute data from various sensors and maps to the degree that they are able to control the vehicle completely without supervision.
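The contrast between hand-written rules and learned behavior can be made concrete with a toy sketch. Everything here is an illustrative assumption rather than how any production system works: the rule table, the two features (distance and closing speed), the four training examples, and the choice of a nearest-centroid classifier as a stand-in for "machine learning" are all hypothetical.

```python
from math import dist

# Hand-written rules: every situation needs its own explicit entry.
# (Hypothetical object types and positions, for illustration only.)
RULES = {
    ("pedestrian", "in_lane"): "brake",
    ("vehicle", "in_lane"): "brake",
    ("vehicle", "adjacent_lane"): "continue",
}

def rule_based_decision(obj_type, position):
    """Return an action, or fail on any situation nobody coded for."""
    key = (obj_type, position)
    if key not in RULES:
        raise KeyError(f"no rule for {key}")
    return RULES[key]

# Learned alternative: a nearest-centroid classifier over toy features
# (distance to object in metres, closing speed in metres per second).
TRAINING = [
    ((5.0, 10.0), "brake"),
    ((8.0, 12.0), "brake"),
    ((60.0, 1.0), "continue"),
    ((80.0, 0.5), "continue"),
]

def fit_centroids(examples):
    """Average the feature vectors of each label into one centroid."""
    sums = {}
    for (d, v), label in examples:
        total_d, total_v, n = sums.get(label, (0.0, 0.0, 0))
        sums[label] = (total_d + d, total_v + v, n + 1)
    return {label: (sd / n, sv / n) for label, (sd, sv, n) in sums.items()}

def learned_decision(centroids, features):
    """Pick the label whose centroid is closest to the new observation."""
    return min(centroids, key=lambda label: dist(centroids[label], features))

CENTROIDS = fit_centroids(TRAINING)
```

With these definitions, `rule_based_decision("cyclist", "in_lane")` raises `KeyError` because no one wrote that rule, while `learned_decision(CENTROIDS, (7.0, 9.0))` still returns an action for an observation that never appeared in the training data. Real systems use far richer models and far more data, which is where the extra computing power comes in.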

That is a lot more complexity than we humans are used to designing into our vehicles. And yes, while several sensing systems have difficulty in various conditions, and localization accuracy does have room for improvement, as does handling of mapping data[13], the driverless car that killed a pedestrian in 2018 saw her long enough to reclassify her three times before hitting her[9].

We would be forgiven for thinking an autonomous vehicle should have thought to stop before hitting a person. Current software systems do have trouble identifying cues in our communication like gestures and eye movement[5]. They are best adapted to structured systems, but unstructured events on the road, like an ambulance speeding against traffic or a malfunctioning signal, can be difficult for current systems to understand quickly.

While all this is true, the car that killed a pedestrian in 2018 had safety functions like its emergency braking system disabled, possibly so it could act more like a human driver, without sudden, erratic stops[3]. It could be argued that the system was handicapped before failure, by people. That’s exactly what the National Transportation Safety Board (NTSB), which analyzes transportation accidents to inform safer transportation practices, says in its preliminary report[14] and hearings on this accident[15]. The NTSB found fault in nearly every human involved: from Arizona highway policy, to the human failsafe in the car who was distracted by their phone, to the pedestrian with methamphetamines in their system walking outside the crosswalk. It also leveled a slew of entirely factual criticism at Uber, which was found to be at fault but not prosecuted in court:

Uber did not have a formal safety plan in place at the time of the crash.

The board also found that Uber’s autonomous vehicles were not properly programmed to react to pedestrians crossing the street outside of designated crosswalks. Moreover, Uber revealed to the board that its self-driving test vehicles had been involved in over three dozen crashes prior to the fatal one in Tempe[17].

Improving road safety to where we are today took decades, but it looks like we are moving a lot faster than we used to. Legislators in 36 U.S. states[17] and in Congress have already enacted laws on driverless cars, and the U.S. Department of Transportation’s Comprehensive Management Plan for Automated Vehicle Initiatives[19] includes over $120 million in targeted research and technology development funding, informed by frequent engagement with stakeholders outside the public sector.

Companies have banded together too, as in the Automated Vehicle Safety Consortium[20], to jointly develop safety best practices at each level of autonomy. Perhaps unsurprisingly given its impressively broad yet entirely corporate makeup, which includes Uber, its first set of recommendations focuses mostly on “fixing” the failsafe drivers and the general public, but more will be tackled in time.

Even the United Nations has released a “preliminary framework” for the globalization of this technology[21]. It is just a series of topics and working principles at this point, half of them on aspects of safety, and it has been criticized for missing a few important elements of this technology’s global proliferation. Loopholes in its definition of safety also suggest it might let autonomous vehicles “get away” with anything short of bodily harm. This will likely be revised as more countries become concerned about self-driving cars in their neighborhoods.

At the working level, real progress is being made through industry collaboration, like the “Safety First for Automated Driving” report[22]. Its 150+ pages, authored by eleven companies including Intel and BMW, begin with the world’s laws and regulations, then move on to verification and validation of component systems, cybersecurity, a breakdown of the different elements and steps, physical and digital, and case studies on how to make a car that drives itself. It is entirely non-binding, and the authors freely admit how much they do not know yet, especially about the challenging problems that remain unsolved.

Big, car-centric cities in the United States are thinking about this, too. Los Angeles’s Department of Transportation’s Strategic Implementation Plan[23], for example, devotes much of its length to the integration of autonomous vehicles via strict control, including APIs meant to be enforced on all autonomous vehicles operating in Los Angeles, whether ground or aerial. Ambitious as this is, and although we are much better than we once were at adapting to technological change, technology is probably advancing more rapidly than our conceptual understanding of what to do with it.

Google’s Waymo, which is already selling rides in its autonomous taxis inside a geofenced area in Phoenix, AZ, USA, uses “pathological situations,” like people jumping out of bags dressed as Elmo in front of the car, to prepare its systems for the unexpected[24]. Today, there are not only driverless rides for sale in Arizona, but startups filling niche markets and generating revenue.
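A geofence of the kind mentioned above boils down to asking whether the vehicle's position lies inside a polygon. The following is a minimal sketch using the standard ray-casting test. The rectangular `SERVICE_AREA` and its flat x/y coordinates are hypothetical placeholders, not any operator's real service boundary, and a real system would work with geographic coordinates and handle boundary cases more carefully.

```python
def inside_geofence(point, polygon):
    """Ray-casting test: True if point (x, y) lies inside the polygon."""
    x, y = point
    inside = False
    n = len(polygon)
    for i in range(n):
        x1, y1 = polygon[i]
        x2, y2 = polygon[(i + 1) % n]
        # Does this edge straddle the horizontal line through the point?
        if (y1 > y) != (y2 > y):
            # x-coordinate where the edge crosses that horizontal line
            x_cross = x1 + (y - y1) * (x2 - x1) / (y2 - y1)
            # Count only crossings to the right of the point
            if x < x_cross:
                inside = not inside
    return inside

# Hypothetical service area: a 10 x 6 rectangle in arbitrary flat units.
SERVICE_AREA = [(0.0, 0.0), (10.0, 0.0), (10.0, 6.0), (0.0, 6.0)]
```

A ride request at `(5.0, 3.0)` would fall inside this area, while one at `(12.0, 3.0)` would not. An odd number of edge crossings means the point is inside; an even number means it is outside.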

Markets and morality are already converging. It seems now only a matter of time, as these technologies continue to improve and as regulators keep pace, before driverless cars can operate in increasingly complex scenarios compared to the well-ordered environment of newer cities in the American Southwest.

The speed of the development and deployment of this technology cannot be overstated. The Defense Advanced Research Projects Agency (DARPA) held an Autonomous Vehicle Grand Challenge in 2004. “Every vehicle in that first Grand Challenge in 2004 crashed, failed, or caught fire”[25], and 15 years later there are autonomous taxis for hire.

The race to autonomous platforms is happening with every other kind of vehicle, too. These autonomous cars, trucks, boats, planes, drones, etc. will interact with each other and humans. This only amplifies our already heightened need for trust in vehicles that do not need a human to go from point A to point B. The term “Trusted Autonomy” was first coined to describe how humans interact with each other. It now extends to machines and digital systems[26].

Few understand the connection between the technology that lets a car drive itself and the technology that lets planes, ships, and spacecraft ‘drive’ themselves better than the military, because they work in each of these domains every day. The Defense Advanced Research Projects Agency (DARPA) has been running an Assured Autonomy program to develop trusted autonomy through the design, development, and deployment of land, sea, air, and space systems[27]. DARPA is investigating tools like mathematical models that quantify risk and uncertainty in algorithms during development, and safety kernel methods to provide assurance in operations. The same autonomy technology story playing out in the automobile sector should be expected in every other.
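The idea of a safety kernel can be illustrated with a small sketch: a simple, checkable layer sits between an untrusted planner (for example, a learned model) and the actuators, and overrides any command that would leave a safe envelope. This is not DARPA's method; the function names, the constant-deceleration stopping model, and the default parameter values are all assumptions chosen for illustration.

```python
def stopping_distance(speed_mps, max_decel_mps2):
    """Distance needed to stop from speed_mps at constant deceleration."""
    return speed_mps ** 2 / (2.0 * max_decel_mps2)

def safety_kernel(proposed_accel, speed_mps, gap_m,
                  max_decel_mps2=6.0, margin_m=2.0):
    """Pass the planner's command through unless the vehicle could no
    longer stop within the gap to the nearest obstacle (plus a margin),
    in which case command maximum braking instead."""
    if stopping_distance(speed_mps, max_decel_mps2) + margin_m >= gap_m:
        return -max_decel_mps2
    return proposed_accel
```

At 10 m/s with a 50 m gap, the kernel lets a proposed acceleration of 1.0 m/s² through; at 20 m/s with a 30 m gap, it replaces the same command with -6.0 m/s². The appeal of this design is that the kernel is small enough to analyze exhaustively even when the planner it supervises is not. This simplified version ignores reaction time and moving obstacles.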

Or does this technology, as it spreads across far more than transportation, open up possibilities for new ways to live? There may be more than one answer as we choose, or perhaps allow, this suite of technologies we call “autonomy,” its users, and applications to be controlled. Making autonomous road vehicles more trustworthy seems only a matter of time; how much we trust each other, a matter of choice.

Continue learning? Complement this article with our 2020 Autonomous Vehicle Technology Report, to which Jordan Sotudeh has been an important contributor.

References

1. Office USGA. Vehicle Cybersecurity: DOT and Industry Have Efforts Under Way, but DOT Needs to Define Its Role in Responding to a Real-world Attack [Internet]. U.S. Government Accountability Office (U.S. GAO). 2016 [cited 2019Nov23]. Available from: https://www.gao.gov/products/GAO-16-350
2. Bartolini C, Tettamanti T, Varga I. Critical features of autonomous road transport from the perspective of technological regulation and law. Transportation Research Procedia [Internet]. 2017 [cited 2020Jan16];27:791–8. Available from: https://www.sciencedirect.com/science/article/pii/S2352146517308992
3. Stewart J. Humans Just Can't Stop Rear-Ending Self-Driving Cars-Let's Figure Out Why [Internet]. Wired. Conde Nast; 2018 [cited 2019Nov21]. Available from: https://www.wired.com/story/self-driving-car-crashes-rear-endings-why-charts-statistics/
4. Szymkowski S. Autonomous car safety group proposes human operator training and oversight [Internet]. Roadshow. CNET; 2019 [cited 2019Nov26]. Available from: https://www.cnet.com/roadshow/news/autonomous-car-safety-group-avsc-human-operator/
5. Oliver N, Potočnik K, Calvard T. To Make Self-Driving Cars Safe, We Also Need Better Roads and Infrastructure [Internet]. Harvard Business Review. 2018 [cited 2019Nov21]. Available from: https://hbr.org/2018/08/to-make-self-driving-cars-safe-we-also-need-better-roads-and-infrastructure
6. Coren MJ. Carnage followed the first automobile. How many deaths will we accept from self-driving cars? [Internet]. Quartz. Quartz; 2018 [cited 2019Nov20]. Available from: https://qz.com/1233563/ubers-first-self-driving-car-death-raises-questions-about-acceptable-levels-of-autonomous-vehicle-safety/
7. Loomis B. 1900-1930: The years of driving dangerously [Internet]. Detroit News. 2015 [cited 2019Nov21]. Available from: https://www.detroitnews.com/story/news/local/michigan-history/2015/04/26/auto-traffic-history-detroit/26312107/
8. National Highway Traffic Safety Administration. NCSA Publications & Data Requests [Internet]. CrashStats. [cited 2019Nov21]. Available from: https://crashstats.nhtsa.dot.gov/#/
9. Zaveri M. Prosecutors Don't Plan to Charge Uber in Self-Driving Car's Fatal Accident [Internet]. The New York Times. The New York Times; 2019 [cited 2019Nov23]. Available from: https://www.nytimes.com/2019/03/05/technology/uber-self-driving-car-arizona.html
10. Naughton K. Waymo’s Autonomous Taxi Service Tops 100,000 Rides [Internet]. Bloomberg.com. Bloomberg; 2019 [cited 2020Feb9]. Available from: https://www.bloomberg.com/news/articles/2019-12-05/waymo-s-autonomous-taxi-service-tops-100-000-rides
11. Center for Sustainable Systems. Autonomous Vehicles Factsheet [Internet]. University of Michigan; 2019 [cited 2019Nov25]. Available from: http://css.umich.edu/factsheets/autonomous-vehicles-factsheet
12. Haydin V. Everything You Wanted to Know About Types of Operating Systems in Autonomous Vehicles [Internet]. Intellias. Intellias; 2019 [cited 2020Feb14]. Available from: https://www.intellias.com/everything-you-wanted-to-know-about-types-of-operating-systems-in-autonomous-vehicles/
13. Laura, Blumenthal, Anderson, M. J, Kalra, Nidhi. How AV Safety Can Be Defined, Tested, and Measured [Internet]. RAND Corporation. 2018 [cited 2019Nov24]. Available from: https://www.rand.org/pubs/research_reports/RR2662.html
14. Preliminary Report, Highway, HWY18MH010 Nov 6, 2019. Available from: https://www.ntsb.gov/investigations/AccidentReports/Reports/HWY18MH010-prelim.pdf
15. Hawkins AJ. Uber is at fault for fatal self-driving crash, but it's not alone [Internet]. The Verge; 2019 [cited 2020Feb20]. Available from: https://www.theverge.com/2019/11/19/20972584/uber-fault-self-driving-crash-ntsb-probable-cause
16. International Communication Association. Humans less likely to return to an automated advisor once given bad advice [Internet]. Phys.org. 2016 [cited 2019Nov24]. Available from: https://phys.org/news/2016-05-humans-automated-advisor-bad-advice.html#jCp
17. National Conference of State Legislatures. Autonomous Vehicles State Bill Tracking Database [Internet]. National Conference of State Legislatures; 2019 [cited 2019Nov23]. Available from: http://www.ncsl.org/research/transportation/autonomous-vehicles-legislative-database.aspx
18. Hawkins AJ. US will rewrite safety rules to permit fully driverless cars on public roads [Internet]. The Verge; 2018 [cited 2019Nov25]. Available from: https://www.theverge.com/2018/10/4/17936576/self-driving-car-av-guidelines-3-nhtsa-elaine-chao
19. United States Department of Transportation. Comprehensive Management Plan for Automated Vehicle Initiatives [Internet]. United States Department of Transportation; 2018 Jul [cited 2019Nov23]. Available from: https://www.transportation.gov/sites/dot.gov/files/docs/policy-initiatives/automated-vehicles/317351/usdot-comprehensive-management-plan-automated-vehicle-initiatives.pdf
20. Krok A. GM, Toyota, Ford and SAE announce new group to set up safety framework for future AVs [Internet]. Roadshow. CNET; 2019 [cited 2019Nov26]. Available from: https://www.cnet.com/roadshow/news/gm-toyota-ford-sae-automated-vehicle-safety-consortium/
21. Eliot L. Key Insights About The U.N.'s New Framework For Globalization Of Self-Driving Cars [Internet]. Forbes. Forbes Magazine; 2019 [cited 2019Nov26]. Available from: https://www.forbes.com/sites/lanceeliot/2019/09/06/key-insights-about-the-uns-new-framework-for-globalization-of-self-driving-cars/#63e9e4f2799b
22. Aptiv, Audi, Baidu, BMW, Continental, Daimler, et al. Safety First for Automated Driving [Internet]. Newsroom. Intel; 2019 [cited 2020Feb20]. Available from: https://newsroom.intel.com/wp-content/uploads/sites/11/2019/07/Intel-Safety-First-for-Automated-Driving.pdf
23. Los Angeles Department of Transportation. Strategic Implementation Plan [Internet]. 2018 Jul [cited 2019Nov24]. Available from: https://static1.squarespace.com/static/57c864609f74567457be9b71/t/5b625117575d1f924b6570ad/1533169956703/LADOT_SIP_06122018.pdf
24. Temple J. Alphabet's moonshot chief: Regulating driverless cars demands testing the "smarts" of the systems [Internet]. MIT Technology Review. MIT Technology Review; 2018 [cited 2019Nov24]. Available from: https://www.technologyreview.com/s/610680/alphabets-moonshot-chief-regulating-driverless-cars-demands-testing-the-smarts-of-the/
25. Davies A. Inside the Races That Jump-Started the Self-Driving Car [Internet]. Wired. Conde Nast; 2017 [cited 2020Feb9]. Available from: https://www.wired.com/story/darpa-grand-urban-challenge-self-driving-car/
26. Abbass HA, Petraki E, Merrick K, Harvey J, Barlow M. Trusted Autonomy and Cognitive Cyber Symbiosis: Open Challenges. Cognitive Computation [Internet]. 2015 [cited 2020Feb9];8(3):385–408. Available from: https://www.ncbi.nlm.nih.gov/pmc/articles/PMC4867784/
27. DARPA Public Affairs. Progressing Towards Assuredly Safer Autonomous Systems [Internet]. Defense Advanced Research Projects Agency; 2020 [cited 2020Feb6]. Available from: https://www.darpa.mil/news-events/2020-01-29
28. Chui M, Manyika J, Miremadi M. Where machines could replace humans--and where they can't (yet) [Internet]. McKinsey & Company. 2016 [cited 2020Feb9]. Available from: https://www.mckinsey.com/business-functions/mckinsey-digital/our-insights/where-machines-could-replace-humans-and-where-they-cant-yet