TWS Evolution: What is next in True Wireless Audio?

A closer look at recent market trends and the audio technologies that enhance the functionality of TWS devices.

This article looks at the evolution of TWS (True Wireless Stereo) devices and some of the technologies driving their growth. TWS describes wireless earbuds that play stereo sound with no cable between the left and right earpieces or between the earpieces and the source device. Bluetooth is typically used to transmit audio wirelessly in TWS devices. TWS earphones have developed rapidly since their introduction in 2015.

The rise of TWS earbuds

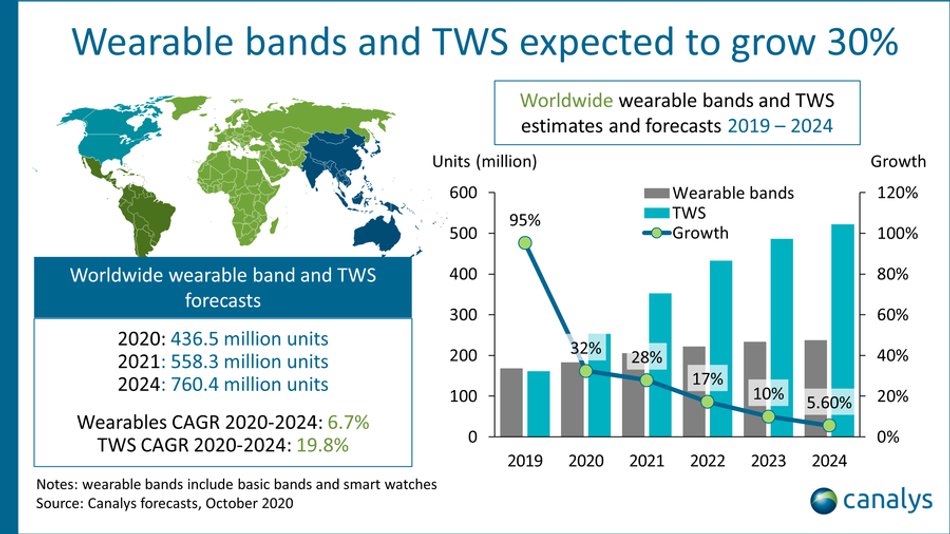

The first TWS earbuds, the Bragi Dash, were released in 2015 [2] at a price of around $300. Almost a year later, the company released a new model called 'The Headphone' at roughly half the price of its predecessor [4]. However, the technology only began to gain momentum after Apple announced AirPods in 2016. In the years that followed, the TWS market grew steadily; in 2020 alone, TWS shipments grew by over 30% compared to the previous year [3]. Today, brands such as JBL, Skullcandy, and OnePlus offer entry-level earbuds under $100 that deliver solid performance for the price. As the technology becomes more affordable, it is expected to penetrate new and emerging markets further.

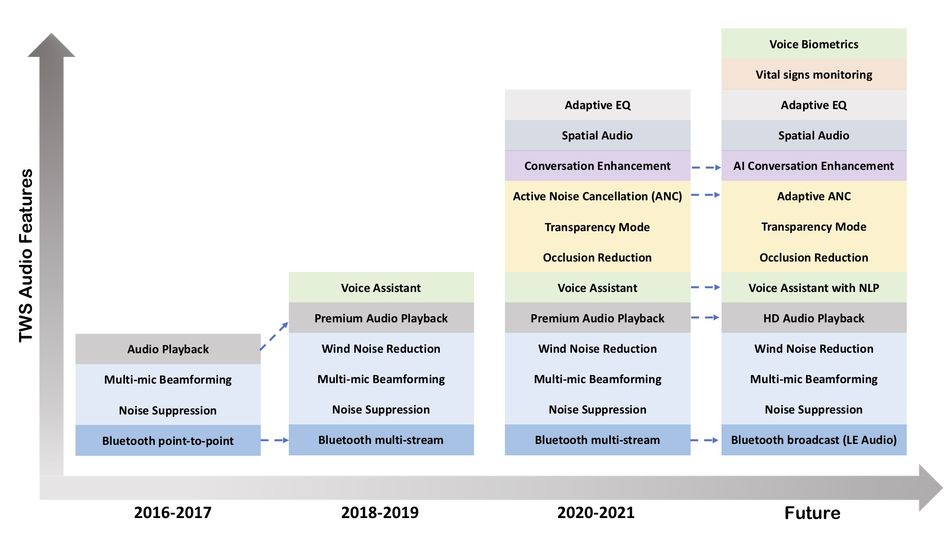

At the high end, TWS manufacturers will continue to push the boundaries, adding new features to differentiate their products and capture more value. The potential of TWS has not yet been fully realized, and ongoing innovation in several key technology areas should keep these devices evolving. Figure 2 roughly illustrates the evolution of TWS audio features.

Welcoming high-resolution streaming audio for all

Thanks to the popularity of lossy codecs such as MP3 and AAC for streaming audio, most consumers have grown accustomed to listening to compressed versions of their favorite tracks. These codecs served a critical purpose: they made acquiring and distributing music cheaper and more accessible, letting more listeners hear music on the go on a wide variety of devices. However, audio enthusiasts have long been waiting for lossless audio from the major streaming service providers. In 2021, Spotify, Amazon, and Apple made a slew of announcements bringing high-resolution music to their subscribers.

In recent surveys conducted by Qualcomm and Spotify, sound quality emerged as end users' top priority, with 77% of respondents saying they are interested in high-resolution music [5]. While battery life, comfort, and price all matter in purchase decisions, audio quality is the biggest driver. The era of wide-scale consumption of high-resolution streaming music on devices such as TWS earbuds appears to be upon us.

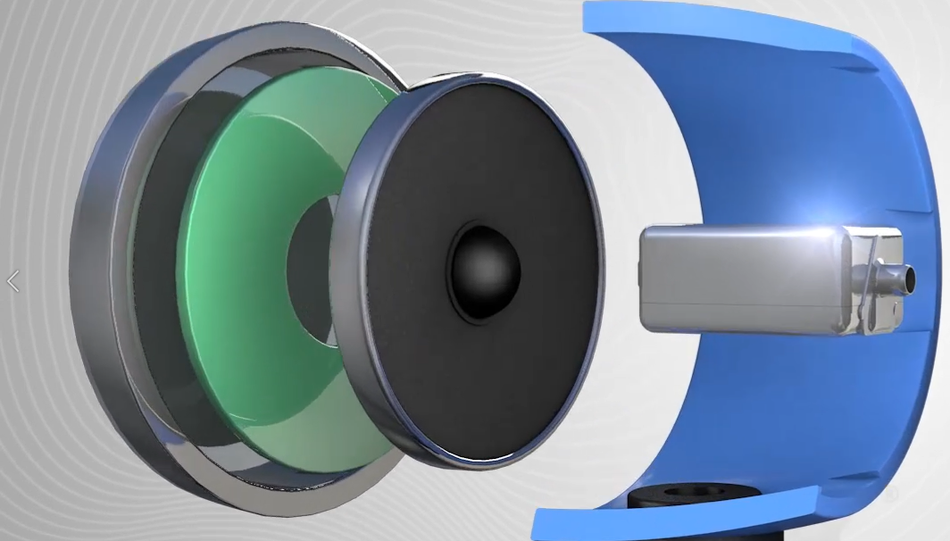

Speaker drivers in earphones deliver the last mile of the audio chain and are therefore critical to bringing high-resolution music to users' ears. In TWS devices, Balanced Armature (BA) speaker drivers offer improved fidelity and detail over traditional dynamic drivers. A BA produces sound using a reed (the armature) that is balanced in the static magnetic field between two magnets inside the driver's outer shell. A stationary coil driven by the audio signal magnetizes the reed, causing it and the attached diaphragm to move in step with the music. The resulting sound is clean and detailed, free of unwanted resonances in the audio band. BAs also have a superior high-frequency response, making them a good choice for high-resolution music. In addition to delivering high-fidelity sound, BAs come in a compact form factor and consume less power than the incumbent dynamic drivers.

BAs are sometimes combined with dynamic drivers in what is commonly called a hybrid speaker driver. In this configuration, the BA acts as a tweeter, reproducing the critical high-frequency portion of the audio spectrum, while the dynamic driver acts as a woofer. The hybrid combination helps TWS designers deliver products that pair superior sound quality with active noise cancellation (ANC). Hybrid drivers offer TWS designers a strong value proposition and are becoming more common in mid- to high-end TWS devices.
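
To make the tweeter/woofer split concrete, here is a minimal Python sketch of a digital crossover feeding a hybrid driver. It assumes the split is done in DSP with a first-order low-pass filter and its complement; the 3 kHz crossover frequency, the 48 kHz sample rate, and the function names are illustrative assumptions rather than values from any particular TWS product. Real designs typically use steeper filters, and some hybrids split the bands acoustically or with passive components instead.

```python
import math

def one_pole_lowpass(signal, cutoff_hz, sample_rate_hz):
    """First-order IIR low-pass filter: y[n] = y[n-1] + a * (x[n] - y[n-1])."""
    # Smoothing coefficient derived from the analog RC time constant at cutoff_hz.
    a = 1.0 - math.exp(-2.0 * math.pi * cutoff_hz / sample_rate_hz)
    out, y = [], 0.0
    for x in signal:
        y += a * (x - y)
        out.append(y)
    return out

def split_hybrid(signal, crossover_hz=3000.0, sample_rate_hz=48000.0):
    """Split one audio stream into a woofer feed (dynamic driver) and a
    tweeter feed (BA driver). Crossover values are illustrative assumptions.

    The tweeter feed is the complement of the woofer feed, so the two
    feeds always sum back to the original signal.
    """
    woofer = one_pole_lowpass(signal, crossover_hz, sample_rate_hz)
    tweeter = [x - low for x, low in zip(signal, woofer)]
    return woofer, tweeter
```

Because the tweeter feed is computed as the input minus the woofer feed, the two outputs recombine exactly, which keeps the split transparent at the cost of a gentle 6 dB/octave slope: a 100 Hz tone lands almost entirely in the woofer feed, while a 15 kHz tone lands mostly in the tweeter feed.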

These streaming audio trends will continue to push the boundaries of speaker driver technologies, including BAs. Advances in BA technology will continue to reduce power consumption while delivering high-fidelity sound in compact designs.

Voice call quality: Narrowing the gap with professional headsets

Consumers increasingly use hearables such as TWS earbuds for voice calls on the move, often in noisy public environments such as workplaces, shopping malls, and public transportation. Unlike professional headsets with a long boom microphone, consumer-grade hearables are not optimized for voice pickup, and without a boom it can be challenging to sustain high-quality communication, particularly in noisy environments. Wind noise in particular can reach levels above 50 dB SPL and severely degrade the Signal-to-Noise Ratio (SNR). To capture voice well in all conditions, advanced TWS earbuds use multiple microphones and other sensors, such as a voice vibration sensor, in each earbud.
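
The effect of wind noise on SNR can be worked through with a little decibel arithmetic. The sketch below assumes incoherent noise sources, whose powers (not their dB values) add; the speech and noise levels in the usage note are hypothetical.

```python
import math

def combine_levels_db(levels_db):
    """Power-sum incoherent noise sources given in dB SPL."""
    total_power = sum(10 ** (level / 10.0) for level in levels_db)
    return 10.0 * math.log10(total_power)

def snr_db(speech_db_spl, noise_levels_db_spl):
    """SNR in dB: speech level minus the combined noise level (both in dB SPL)."""
    return speech_db_spl - combine_levels_db(noise_levels_db_spl)
```

For example, with hypothetical speech at 65 dB SPL at the microphone and 55 dB SPL of background babble, `snr_db(65, [55])` gives 10 dB; adding 60 dB SPL of wind noise, `snr_db(65, [55, 60])`, drops the SNR to roughly 3.8 dB.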

A variety of techniques are used to minimize noise and improve pickup of the desired signal, including multi-microphone beamforming, voice vibration sensor processing, inner-ear microphone signal processing, acoustic echo cancellation, and noise suppression [9]. Better microphone and voice vibration sensor performance, along with advanced algorithms running on audio edge processors or dedicated cores in Bluetooth audio SoCs, will keep pushing the state of the art in TWS voice call performance and narrow the gap with professional communication headsets. Power optimization of these algorithms is another key topic for TWS designers, who aim to extend talk time beyond the current 3 to 4 hours in active voice call mode.
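
Multi-microphone beamforming can be illustrated with the classic delay-and-sum beamformer. The minimal sketch below assumes two microphones, integer-sample delays, and a target (the user's mouth) whose sound reaches the first microphone two samples before the second. Production TWS beamformers are typically adaptive and use fractional delays, so treat this as a textbook illustration rather than any vendor's algorithm.

```python
def delay_and_sum(mic_signals, delays_samples):
    """Delay-and-sum beamformer with integer sample delays.

    mic_signals:    list of equal-length channels, one per microphone.
    delays_samples: per-channel delay (>= 0) chosen so the target
                    direction is time-aligned across all channels.
    """
    n = len(mic_signals[0])
    out = [0.0] * n
    for channel, d in zip(mic_signals, delays_samples):
        for i in range(n):
            if i - d >= 0:
                out[i] += channel[i - d]  # delayed copy of this channel
    # Averaging keeps the aligned target at unit gain.
    return [s / len(mic_signals) for s in out]
```

Delaying the first microphone by two samples (`delays_samples=[2, 0]`) lines up the speech in both channels, so it adds coherently. Sound arriving from the opposite direction ends up misaligned by four samples, so an interferer whose period is eight samples (6 kHz at a 48 kHz sample rate) cancels completely, while other frequencies are only partially attenuated.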

Enhancing the utility and user experience with AI

Machine learning (ML) algorithms already influence many aspects of daily life, and TWS devices are no exception: several of today's TWS devices already run ML algorithms. While ANC and transparency mode have become common features in recent years, some TWS devices use AI-driven adaptation that analyzes sound in real time and automatically adjusts the noise cancellation or transparency settings. These adaptation algorithms account for changes in the ambient environment, and even for variations in fit, to deliver the best possible performance in all conditions.
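
Adaptive noise cancellation is commonly built on the least-mean-squares (LMS) algorithm. The sketch below is a plain LMS canceller, assuming a reference microphone that hears the noise and a primary signal containing that noise after an unknown acoustic path. It deliberately ignores the speaker-to-ear "secondary path" that practical ANC handles with the filtered-x LMS (FxLMS) variant, and the tap count and step size are arbitrary illustrative values.

```python
def lms_cancel(reference, primary, num_taps=4, step_size=0.05):
    """Plain LMS adaptive noise canceller (simplified; no secondary path).

    reference: noise picked up by a reference microphone.
    primary:   the same noise after an unknown acoustic path.
    Returns (residual, weights): the residual is primary minus the filter's
    estimate of the noise, i.e. what would be left after cancellation.
    """
    weights = [0.0] * num_taps
    history = [0.0] * num_taps  # history[k] holds reference[n - k]
    residual = []
    for x, d in zip(reference, primary):
        history = [x] + history[:-1]                      # shift in newest sample
        y = sum(w * h for w, h in zip(weights, history))  # estimated noise
        e = d - y                                         # residual after cancellation
        # LMS update: nudge each weight in the direction that reduces e**2.
        weights = [w + step_size * e * h for w, h in zip(weights, history)]
        residual.append(e)
    return residual, weights
```

If the unknown path is, say, `d[n] = 0.8 x[n] - 0.3 x[n-1]`, the weights converge toward `[0.8, -0.3, 0, 0]` and the residual shrinks toward zero. In a real earbud, changes in fit alter that path, which is why the filter has to keep adapting.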

AI-enabled approaches are also starting to be used for conversation enhancement in TWS devices. Conversation enhancement processes sound in real time to selectively boost human voices while suppressing ambient noise, enabling clear face-to-face conversations. Done well, it can give TWS users a better social experience in noisy environments such as pubs, stadiums, and public transportation. Chatable recently announced a deep neural network (DNN) based algorithm that processes audio directly for conversation enhancement with no perceptible latency and is optimized to run on-chip.
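
Conversation enhancement ultimately comes down to applying more gain where speech dominates and less where noise dominates. The text does not describe how Chatable's DNN works, so the sketch below swaps in a classical technique instead: a Wiener-style gain per frequency band, computed from a noisy-signal power estimate and a noise power estimate that are assumed to come from elsewhere (for example, a noise tracker). Mask-based DNN enhancers often learn a comparable per-band gain, but this is an illustration, not Chatable's algorithm; the gain floor value is a hypothetical choice.

```python
def wiener_gains(noisy_power, noise_power, gain_floor=0.1):
    """Return one gain per band: G = SNR / (1 + SNR), limited below by gain_floor."""
    gains = []
    for p_noisy, p_noise in zip(noisy_power, noise_power):
        # Estimated speech-to-noise ratio via power subtraction (never negative).
        snr = max(p_noisy - p_noise, 0.0) / max(p_noise, 1e-12)
        # Speech-dominated bands approach unity gain; noise-only bands drop to the floor.
        gains.append(max(snr / (1.0 + snr), gain_floor))
    return gains
```

For example, `wiener_gains([10.0, 1.0, 2.0], [1.0, 1.0, 1.0])` returns gains of about 0.9, 0.1, and 0.5: the speech-dominated first band passes almost untouched, the noise-only second band is pulled down to the floor, and the floor avoids muting any band entirely.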

AI is also used for output audio processing in TWS devices. Intelligent algorithms can upscale compressed music in real time for a richer listening experience, and can even adjust the EQ automatically to match the user's hearing profile. For example, the Anker Soundcore Liberty 2 Pro offers a simple listening test to personalize the EQ, while Sony's WF-1000XM4 earbuds use AI to recognize instrumentation, musical genres, and individual elements of each song, such as vocals or interludes, to upscale music dynamically.
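
The listening-test approach to EQ personalization can be sketched as a mapping from per-band hearing thresholds to EQ boosts. The example below uses the half-gain rule, a classic hearing-aid fitting rule of thumb that prescribes gain equal to half the measured hearing loss, plus a hypothetical 12 dB cap. It is not Anker's or Sony's algorithm, and the band frequencies and threshold values in the usage note are made up.

```python
def personalized_eq(thresholds_db_hl, max_boost_db=12.0):
    """Map per-band hearing thresholds (dB HL) to EQ boosts (dB).

    Half-gain rule: boost = half the threshold elevation, never negative,
    and capped at max_boost_db (a hypothetical limit for this sketch).
    """
    return {
        band_hz: min(max(threshold, 0.0) / 2.0, max_boost_db)
        for band_hz, threshold in thresholds_db_hl.items()
    }
```

With made-up test results of 10, 20, and 40 dB HL at 250 Hz, 1 kHz, and 4 kHz, `personalized_eq({250: 10, 1000: 20, 4000: 40})` returns boosts of 5, 10, and 12 dB; the last band hits the cap.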

Contextual awareness could enable functions such as volume control and noise suppression that adapt to the user's environment. For example, the volume could be lowered when the device recognizes that someone is saying the user's name, and noise suppression settings could be adjusted automatically as the user moves through very loud or very quiet environments. Read more about the potential of contextual awareness in our earlier article.
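
The contextual behaviors described above amount to a small policy layered on top of detectors. The sketch below assumes two hypothetical inputs: an ambient level measured by the earbud's outer microphone and a flag from a name-detection (keyword-spotting) model. The 40 dB and 80 dB SPL thresholds and the ducking factor are illustrative choices, not values from any product.

```python
from dataclasses import dataclass
from typing import Tuple

# Illustrative policy parameters (assumptions, not product values).
QUIET_DB_SPL = 40.0
LOUD_DB_SPL = 80.0
DUCK_FACTOR = 0.3

@dataclass
class Context:
    ambient_db_spl: float  # ambient level from the earbud's outer microphone
    name_detected: bool    # output of a hypothetical name-detection model

def contextual_settings(ctx: Context, user_volume: float) -> Tuple[float, str]:
    """Return (volume, noise_suppression_level) for the current context."""
    # Duck playback so the user can hear whoever is calling their name.
    volume = user_volume * DUCK_FACTOR if ctx.name_detected else user_volume
    if ctx.ambient_db_spl >= LOUD_DB_SPL:
        suppression = "high"
    elif ctx.ambient_db_spl <= QUIET_DB_SPL:
        suppression = "off"
    else:
        suppression = "medium"
    return volume, suppression
```

For instance, a user on a loud train (`Context(85.0, False)`) keeps their chosen volume with high suppression, while someone calling the user's name in a quiet office (`Context(30.0, True)`) triggers ducking with suppression switched off.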

Running complex real-time AI algorithms on TWS devices requires power-efficient processors with sufficient compute and memory. With continued improvements in edge audio processors and algorithms, TWS devices will keep getting smarter.

Spatial Audio for an Immersive Experience

Beyond upscaling digital music, output audio processing techniques such as spatial audio can deliver a theater-like experience to users consuming rich media content. Spatial audio refers to a suite of algorithms, including directional audio filtering and head tracking, that give the listener the feeling of being present in a three-dimensional acoustic scene. Spatial audio and other output audio processing techniques can give users an immersive experience when watching videos and movies or playing video games.
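
Head tracking is what keeps a spatial audio scene anchored to the room rather than to the head. The minimal sketch below assumes yaw-only head tracking and uses Woodworth's spherical-head approximation of the interaural time difference (ITD), one of the cues a spatial renderer reproduces. The head radius and the angle conventions (azimuth measured clockwise from straight ahead, so positive means to the right) are assumptions, and a full renderer would also apply level differences and HRTF filtering.

```python
import math

HEAD_RADIUS_M = 0.0875       # typical adult head radius (assumed)
SPEED_OF_SOUND_M_S = 343.0   # speed of sound in air at about 20 degrees C

def relative_azimuth(source_az_deg, head_yaw_deg):
    """Source direction relative to the listener's nose, wrapped to [-180, 180)."""
    return (source_az_deg - head_yaw_deg + 180.0) % 360.0 - 180.0

def woodworth_itd_s(rel_az_deg):
    """Interaural time difference in seconds (Woodworth spherical-head model).

    Positive means the sound reaches the right ear first.
    """
    # ITD alone cannot tell front from back, so fold rear angles onto the front.
    lateral = math.asin(math.sin(math.radians(rel_az_deg)))
    return HEAD_RADIUS_M / SPEED_OF_SOUND_M_S * (lateral + math.sin(lateral))
```

If a virtual source sits 30 degrees to the right and the listener turns their head 30 degrees to the right, `relative_azimuth(30, 30)` is 0 and the ITD drops to zero, so the sound now appears straight ahead, exactly where it is in the virtual room. At 90 degrees, the model gives an ITD of roughly 0.66 ms.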

Leveraging the Power of Voice

While voice assistants are widely used in devices such as smart speakers, they are still in the early stages of adoption in TWS and other hearables. As users grow more comfortable using their favorite voice assistants on the go, and as the voice assistant ecosystem matures, adoption in TWS devices is expected to take off. With continued improvements in voice processing technology, features such as natural language processing (NLP) and voice biometrics may also make their way into TWS devices in the not-too-distant future.

Emerging TWS Features to Support Personal Health

Recent developments point to a potential entry of hearables such as TWS earbuds into territory held by hearing aids. The Over-the-Counter (OTC) Hearing Aid Act of 2017 directs the U.S. Food and Drug Administration to create a category of OTC hearing aids for people with mild-to-moderate hearing loss [11]. The act is likely to accelerate the entry of traditional consumer brands into the OTC hearing aid market. Some new TWS devices already offer a degree of hearing augmentation in addition to traditional capabilities such as streaming audio playback and voice calls.

Apple recently added hearing-related features to AirPods through a software update, including features that make quiet voices more audible and tune sounds to the user's hearing needs [12]. However, unlike traditional hearing aids, these devices are currently classified as non-medical devices for users without a hearing impairment diagnosis. Increasingly sophisticated hearing augmentation features are likely to be designed into consumer-grade TWS devices. Manufacturers are also researching the use of audio processing technology to monitor vital signs such as heart rate and respiratory rate. Future TWS devices may be able to measure and estimate advanced clinical metrics using microphones and other sensors coupled with sophisticated signal processing.

Upgrading to Bluetooth Low Energy (LE) Audio

Bluetooth audio is getting a major upgrade with the adoption of the LE Audio standard. First announced by the Bluetooth Special Interest Group (SIG) in 2020, LE Audio has the potential to significantly improve the connectivity experience for TWS earbuds and other wearables. As the name implies, lower power consumption for the wireless audio link is a major benefit, letting users enjoy streaming music for longer. Another key benefit is the ability to connect one pair of earbuds to multiple devices, or to broadcast audio from one source device, such as a smartphone, to multiple pairs of earbuds. These capabilities should unlock new use cases for work and entertainment.

Accelerating TWS Innovation

The TWS market continues to grow and evolve quickly, with every new generation bringing several new features. This rapid pace puts pressure on all TWS manufacturers to invest significantly in R&D. Designing TWS devices is a complex engineering challenge that requires in-depth knowledge across multiple domains and solid system-level acoustic expertise. While the top OEMs have large in-house teams of hardware, software, algorithm, and acoustic engineers, mid-tier and smaller device makers face an uphill task keeping pace with innovation, and ground-up development can be expensive and prone to delays.

To alleviate these pain points, Knowles recently released a first-of-its-kind TWS development platform with advanced features. The platform combines multiple premium Knowles component technologies with partner technologies in a fully functional TWS design. TWS manufacturers can use it to shorten design cycles and bring products with best-in-class audio and AI-based hearing technologies to market faster.

Conclusion

Since its inception, TWS has been a game-changer in wireless audio, taking the market by storm and steadily gaining popularity. This article has briefly discussed emerging TWS audio features. Continued improvements in BA drivers, MEMS microphones, audio edge DSPs, connectivity, software, and AI algorithms will all contribute to the rapid growth and adoption of new features in TWS devices.

Improvements in sound quality, coupled with advanced AI features, will bring richer experiences to consumers through their TWS devices. Wearable smart personal audio devices are undergoing a significant overhaul, and the coming years should bring major breakthroughs.

References and further reading:

A history of headphone design [Internet], Available from: https://www.ssense.com/en-us/editorial/culture/a-history-of-headphone-design

The first truly wireless earbuds are here [Internet], Available from: https://www.theverge.com/2015/1/9/7512829/wireless-earbuds-ces-2015-bragi-dash

Canalys Newsroom - Global TWS and Wearables Q4 2020 [Internet], Available from: https://www.canalys.com/newsroom/canalys-tws-and-wearables-q4-2020

Bragi The Headphone [Internet], Available from: https://in.pcmag.com/headphones-1/113112/bragi-the-headphone

Qualcomm The State of Play Report 2020: A global analysis of user behaviors and desires driving consumer audio [Internet], Available from: https://assets.qualcomm.com/VM-Global-2020-CON-StateOfPlay_02-PDF-page.html

True Wireless Earphone Trends - CES 2021 by Knowles Corporation - Vimeo [Internet], Available from: https://vimeo.com/507297531

How edge computing makes voice assistants faster and more powerful - Network World [Internet], Available from: https://www.networkworld.com/article/3262105/how-edge-computing-makes-voice-assistants-faster-and-more-powerful.html

How audio edge processors enable voice integration in IoT devices - Embedded.com [Internet], Available from: https://www.embedded.com/how-audio-edge-processors-enable-voice-integration-in-iot-devices/

Wearables and Hearables Voice Enhancement - Alango [Internet], Available from: http://alango.com/voice-enhancement-w.php

MedCity News [Internet], Available from: https://medcitynews.com/2017/09/hearing-loss-industry-should-focus-on-patient-engagement

HearingTracker [Internet], Available from: https://www.hearingtracker.com/news/airpods-pro-become-hearing-aids-with-ios-14