Integrating IoT into your Business Processes

As the IoT market is growing and the number of connected devices is exploding, an important question arises: what can we do with all the generated data?

This article was first published on akenza.com.

We are now able to collect data from almost everything, but how can we transform this huge amount of information into valuable insights?

Processes are the backbone of every company’s business: it is, therefore, fundamental to understand how IoT data can be integrated into a workflow to enhance automation and support decision-makers.

A practical example

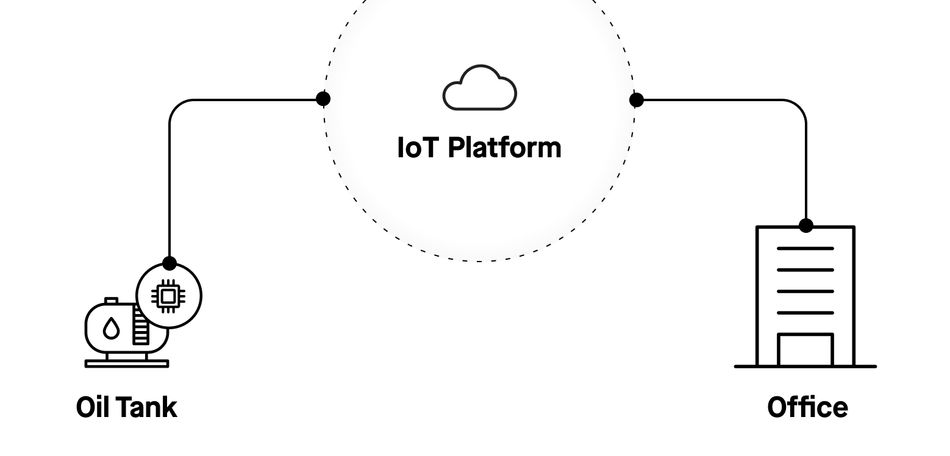

Imagine a simple level control system for a heating oil tank. A sensor measures the oil level in the tank and sends the readings to an IoT platform.

The sensor is interfaced with an IoT platform that manages connection and data collection. In the office, it is possible to access all this data by reading it directly from the IoT platform in a dashboard that shows the current status of the system.

This information, automatically retrieved through digitalisation, is already a big step ahead: nobody has to be on-site to control the oil level.

But let’s not forget our initial question: what else can we do with all this data?

Data Analysis

Considering a typical use case where a Business Intelligence (BI) Tool is in use, the very first step that comes after collecting data is analysis. The data analysis process will, in this case, take place in the BI Tool.

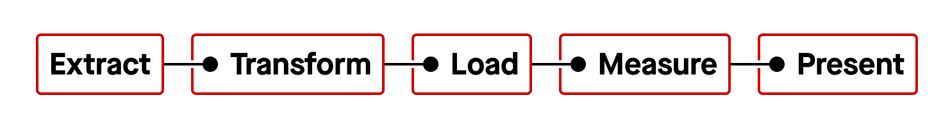

Data analysis is itself a process that can be divided into five subtasks: Extract, Transform, Load, Measure, and Present.

The first three steps are well known as the ETL process, which forms the very first data manipulation phase.

Extract

Regardless of the source, raw IoT data has to be extracted and imported into the BI Tool. In our case, this could mean extracting the last month of oil level measurements. This is what we call a dataset.
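As a minimal sketch of this extraction step, assume the readings are available as a list of records with an ISO 8601 `timestamp` and a `level_l` field. Both field names and the sample values are hypothetical and do not represent an actual platform payload. The last 30 days could then be selected like this:

```python
from datetime import datetime, timedelta

def extract_last_days(readings, now, days=30):
    """Return the readings whose timestamp lies within the last `days` days before `now`."""
    cutoff = now - timedelta(days=days)
    return [
        r for r in readings
        if cutoff <= datetime.fromisoformat(r["timestamp"]) <= now
    ]

# Hypothetical raw readings (field names and values are assumptions for illustration)
readings = [
    {"timestamp": "2023-01-01T08:00:00", "level_l": 1800.0},
    {"timestamp": "2023-02-10T08:00:00", "level_l": 1500.0},
    {"timestamp": "2023-02-20T08:00:00", "level_l": 1420.0},
]

# The dataset: only the readings from the 30 days before 1 March 2023
dataset = extract_last_days(readings, now=datetime(2023, 3, 1))
```

In practice, the BI Tool or the IoT platform would usually apply this time filter in its own query, so the function above only illustrates the idea.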

Transform

Once the data is imported into the BI Tool, it has to be transformed into usable data. It is common to receive data in JSON or a similar format, which BI Tools cannot use as directly as a table. Depending on the data source and format, a series of transformations is needed. Furthermore, even if the data is already in tabular form, it is still necessary to name the columns properly, assign the right format to each value, or add formulas that enrich the information we can get from this data.
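The transformation described here can be sketched in a few lines. The JSON structure below (`deviceId`, `timestamp`, `data.level`) and the tank capacity are assumptions made up for illustration, not a documented message format. The sketch flattens each message into a table row, gives the columns proper names, converts values to the right types, and adds one derived column:

```python
import json
from datetime import datetime

# Hypothetical JSON payload; the structure is an assumption for illustration
raw = '''
[
  {"deviceId": "tank-01", "timestamp": "2023-02-10T08:00:00", "data": {"level": "1500.0"}},
  {"deviceId": "tank-01", "timestamp": "2023-02-20T08:00:00", "data": {"level": "1420.0"}}
]
'''

TANK_CAPACITY_L = 2000.0  # assumed tank capacity in litres

def to_rows(payload):
    """Flatten nested JSON messages into table-like rows with typed values."""
    rows = []
    for msg in json.loads(payload):
        level = float(msg["data"]["level"])  # text -> number
        rows.append({
            "device": msg["deviceId"],
            "measured_at": datetime.fromisoformat(msg["timestamp"]),  # text -> datetime
            "oil_level_l": level,
            "fill_ratio": level / TANK_CAPACITY_L,  # derived column (formula)
        })
    return rows

rows = to_rows(raw)
```

Each dictionary in `rows` corresponds to one table row, which is the shape a BI Tool typically expects.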

Load

Once the data is ready, what we have to do is load it into the BI Tool so it can be used in the next steps.

Measure

A typical measure can simply be a graphical representation of the data, which can already be sufficient to analyze the behaviour of the oil level over the last month. Of course, there are many other possibilities, e.g. mathematical functions such as the average consumption per day or week, or a linear regression to get an idea of the “speed” of oil consumption over a certain timespan.
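To make the two measures mentioned above concrete, here is a small sketch that computes the average daily consumption and an ordinary least-squares line through the level readings. The sample values are invented, and the helper `linear_fit` is written out by hand for illustration rather than taken from any BI Tool:

```python
def linear_fit(xs, ys):
    """Ordinary least-squares fit of y = slope * x + intercept."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x

# Invented sample: one reading per week, oil level in litres
days = [0, 7, 14, 21, 28]
levels = [1800.0, 1660.0, 1520.0, 1380.0, 1240.0]

# Average consumption per day over the whole period
avg_daily_consumption = (levels[0] - levels[-1]) / (days[-1] - days[0])

# The slope of the fitted line is the "speed" of consumption (negative: the level falls)
slope, intercept = linear_fit(days, levels)
```

With this perfectly linear sample, both measures agree on a consumption of 20 litres per day. Real readings are noisier, which is where the regression becomes more informative than a simple difference between the first and last value.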

Present

The importance of this phase is often underestimated. When we present data, it is fundamental to know in advance what message we want to communicate with it. To better understand what this means, imagine correlating the oil level with the outside temperature. The data can now be seen from a completely new perspective, and the information that can be gathered has a higher added value.
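One simple way to quantify such a correlation is the Pearson coefficient. The sketch below uses invented temperature and consumption figures, and the `pearson` helper is a hand-written illustration, not a function of any specific BI Tool:

```python
from math import sqrt

def pearson(xs, ys):
    """Pearson correlation coefficient between two equally long series."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / sqrt(var_x * var_y)

# Invented daily averages: outside temperature (°C) and oil consumption (litres)
temperature_c = [-2.0, 1.0, 4.0, 8.0, 12.0]
consumption_l = [30.0, 26.0, 22.0, 15.0, 10.0]

r = pearson(temperature_c, consumption_l)
```

A value of `r` close to -1, as with this sample, would support the message "the colder it gets, the more oil we burn", which is far easier to present than two separate charts.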

Digital Process

We now have a proper data analysis that provides all the information we may need about the oil level. It is an important step, but what else can we do with all this data?

The same ETL process used for data analysis can be implemented to import data into an Enterprise Resource Planning (ERP) system. As ERPs are the core of company processes, it is easy to imagine the possibilities enabled by feeding this kind of system with IoT data.

Automatic Feedback Control System

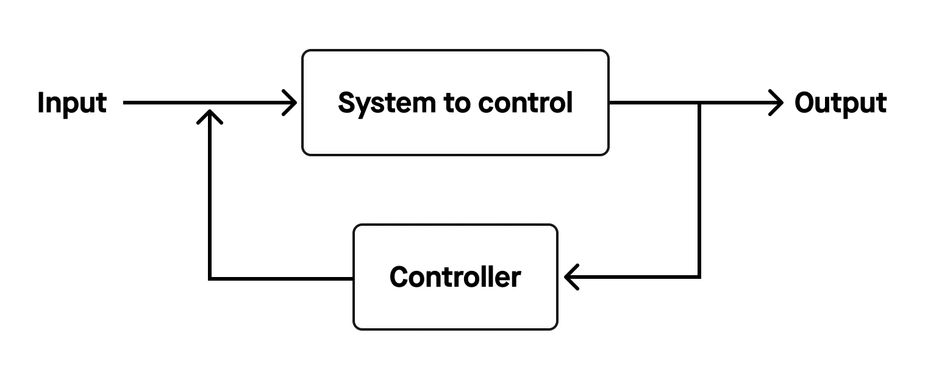

If the ERP knows the level of oil in the tank, and it is also where our purchase orders are placed, it is possible to build what industrial automation calls “Feedback Control of Dynamic Systems”, commonly represented with a block diagram such as the one shown here.

By constantly monitoring the oil level, the ERP can be triggered to purchase additional oil to maintain a certain optimal oil level: just like when we automatically set the temperature of our oven to cook our cake!
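The feedback loop can be sketched as a simple threshold controller. Everything here is hypothetical: the class name, the setpoint and threshold values, and the way a delivery is detected are assumptions for illustration, and the call that would create the purchase order in the ERP is deliberately left out:

```python
from dataclasses import dataclass

@dataclass
class ReorderController:
    setpoint_l: float            # desired oil level after a refill (litres)
    reorder_threshold_l: float   # level below which a purchase is triggered
    order_pending: bool = False  # avoids placing the same order twice

    def update(self, measured_level_l):
        """Compare the measurement with the threshold; return litres to order, or 0.0."""
        if self.order_pending:
            # Assume the delivery has arrived once the level is back above the threshold
            if measured_level_l >= self.reorder_threshold_l:
                self.order_pending = False
            return 0.0
        if measured_level_l < self.reorder_threshold_l:
            self.order_pending = True
            # Order exactly what is missing to reach the setpoint
            return self.setpoint_l - measured_level_l
        return 0.0

controller = ReorderController(setpoint_l=1800.0, reorder_threshold_l=500.0)

# Invented sequence of level readings, including a refill at the end
measurements = [900.0, 600.0, 450.0, 400.0, 1800.0, 1700.0]
orders = [controller.update(level) for level in measurements]
```

In this run, a single order of 1350 litres is triggered when the level first drops below 500 litres, and no further order is placed while the delivery is pending. In a real integration, the returned quantity would be handed to the ERP to create the purchase order.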

Digital Processes and Data Analysis: an intelligent couple

What if we put digital processes and data analysis together and ask ourselves once again: what else can we do with all this data?

At this point, the possibilities are endless, but let's try to imagine a scenario.

The main goal of every process is optimisation, and in a digital process, optimisation starts with data analysis.

In our scenario, we have already collected 3 years of oil consumption measurements, and not only that: we also have temperature data, and we can easily collect data about oil prices over the same timespan. Correlating these three data sources yields value that goes far beyond simply saving the cost of sending a person to check the oil level on site.

By using Artificial Intelligence, for example deep learning algorithms, it is possible to predict oil consumption in advance based on historical data, and to estimate the best moment to purchase oil at the lowest price while guaranteeing the optimal stock on time.

Integrate Akenza with Power BI and Dynamics 365 to leverage the full power of IoT

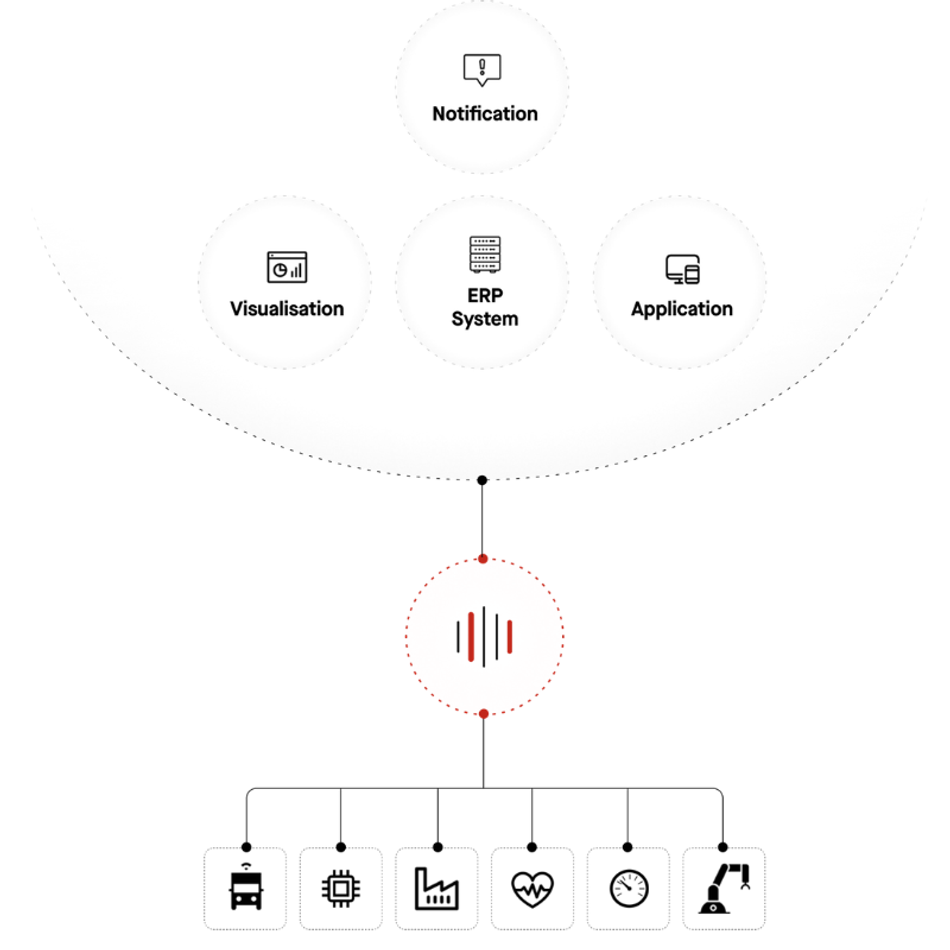

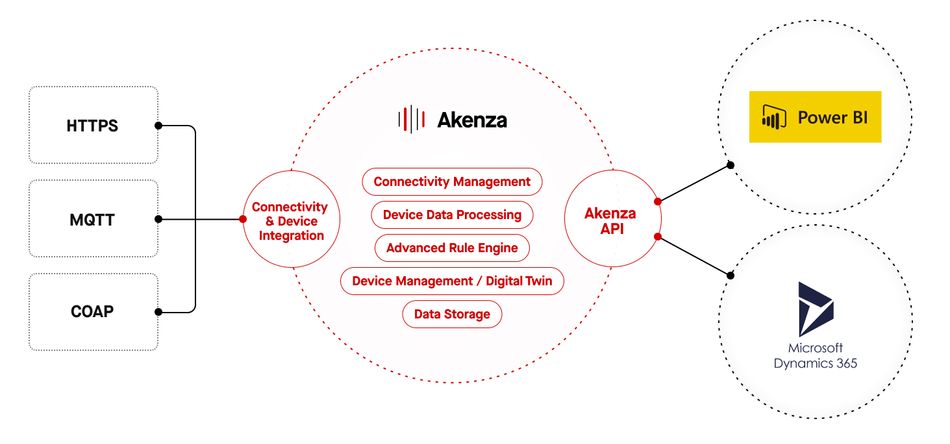

Akenza is the agnostic IoT platform that enables companies to integrate different devices and connections into a single environment.

No matter what sensor is implemented or what network connection is in use, Akenza builds a bridge between the physical world and the virtual world.

Power BI and Dynamics 365

When it comes to Business Applications, Microsoft Power BI and Dynamics 365 are widely adopted as solutions for Business Intelligence and ERP, respectively.

That is why Akenza natively supports the integration with Microsoft’s products: to allow every company to get more from IoT.

Check out akenza.com to discover how easy it is to apply cases such as our “Oil Tank Scenario” to your own business.