Refining AI's Foundation: Mastering Dataset Curation for Machine Learning

Unlocking AI Potential Through Strategic Dataset Curation: A Comprehensive Guide for Enhancing Machine Learning Models

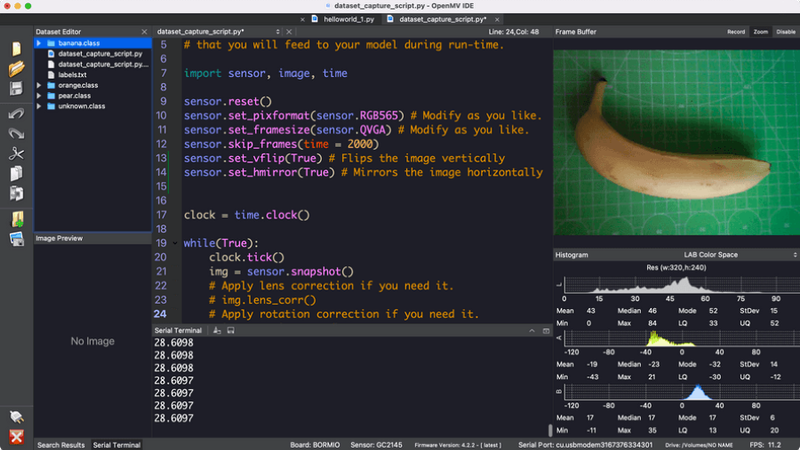

Image credit: Sebastian Romero

In machine learning, the quality and relevance of data play a pivotal role in determining the success of a project. As the saying goes, "Data is the new oil," but just like oil, raw data needs refining to be useful. The process of refining and organizing data and ensuring its quality is known as dataset curation. This step is especially important in machine learning, where the accuracy and efficiency of models depend heavily on the data they are trained on.

As AI integration across industries accelerates, the significance of data curation becomes even more pronounced. A well-curated dataset supports model performance and reduces the risk of biased or inaccurate predictions. For companies like Edge Impulse, which focus on on-device machine learning, efficient and reliable datasets are crucial. This article covers best practices in dataset curation, highlighting strategies and considerations that can make or break a machine learning project.[1]

Tailoring Best Practices Based on Data Type

While there are general best practices that apply universally to dataset curation, the nuances of different types of data (sensor, audio, and image) call for specific considerations. We will explore whether a one-size-fits-all approach is feasible or whether each data type requires a tailored strategy.

Standard Practices in Dataset Curation

Dataset curation is a meticulous process that ensures data quality, relevance, and usability. The foundational principles that apply across data types include:

Versioning and Backing up Files: It's crucial to have a system that tracks the different versions of a document or software project. Platforms like GitHub, Open Science Framework (OSF), and LabArchives@Brown offer versioning for projects. Moreover, backing up data both locally and remotely safeguards against data loss due to unforeseen circumstances.[1]

Contextualizing Your Data: Describing data in a standardized manner is essential for comprehension. Data glossaries, dictionaries, README files, and codebooks provide context. This includes column headings, measurement units, value types, and data collection methods.

Choosing Formats for Re-Use: Proprietary data from instruments might require licenses for access. It's beneficial to convert such data to open or commonly used formats, ensuring broader accessibility.
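One lightweight way to support the versioning and backup practice above is to record a checksum for every file, so that a backup or a later version can be compared against a known state. The following is a minimal sketch using only the Python standard library; the function names (`sha256_of`, `build_manifest`, `changed_files`) are illustrative assumptions, not part of any platform mentioned in this article.

```python
import hashlib
from pathlib import Path


def sha256_of(path: Path, chunk_size: int = 1 << 20) -> str:
    """Return the SHA-256 hex digest of a file, read in chunks."""
    digest = hashlib.sha256()
    with path.open("rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def build_manifest(data_dir: Path) -> dict:
    """Map each file's path (relative to data_dir) to its checksum."""
    return {
        path.relative_to(data_dir).as_posix(): sha256_of(path)
        for path in sorted(data_dir.rglob("*"))
        if path.is_file()
    }


def changed_files(data_dir: Path, manifest: dict) -> list:
    """List files added, removed, or modified since the manifest was built."""
    current = build_manifest(data_dir)
    return sorted(
        name
        for name in set(current) | set(manifest)
        if current.get(name) != manifest.get(name)
    )
```

Storing the manifest alongside the dataset (for example as a JSON file under version control) makes it possible to check that a restored backup matches the original without comparing the files byte by byte.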

Data-Specific Best Practices

Sensor Data: Time-series data, inherent in sensors, requires careful handling to maintain its sequential integrity. Dealing with noise and ensuring the data's real-world relevance are also paramount.

Audio Data: Sound quality assurance is vital, given the variations in audio formats. It's also essential to consider the diverse acoustic environments from which audio data might originate.

Image Data: Image quality control is crucial, especially when dealing with different resolutions and formats. Diverse visual contexts, such as lighting and perspective, should also be considered.

While there are overarching best practices in dataset curation, the specific nature of the data type in question demands tailored strategies to ensure optimal results.
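As an illustration of a type-specific check, the sketch below looks at sensor data: it flags breaks in the sequence of timestamps and applies a simple trailing moving average to reduce noise. It assumes timestamps in milliseconds and a known sampling interval; the function names, the `tolerance` parameter, and the choice of a moving average are assumptions made for this example, and other filters may suit a given sensor better.

```python
def find_gaps(timestamps_ms, expected_interval_ms, tolerance=0.5):
    """Return (index, delta) pairs where samples are out of order
    or spaced further apart than the expected interval allows."""
    gaps = []
    limit = expected_interval_ms * (1 + tolerance)
    for i in range(1, len(timestamps_ms)):
        delta = timestamps_ms[i] - timestamps_ms[i - 1]
        if delta <= 0 or delta > limit:
            gaps.append((i, delta))
    return gaps


def moving_average(values, window=3):
    """Smooth a series with a trailing moving average.

    The first window-1 outputs average only the samples seen so far.
    """
    smoothed = []
    running = 0.0
    for i, value in enumerate(values):
        running += value
        if i >= window:
            running -= values[i - window]
        smoothed.append(running / min(i + 1, window))
    return smoothed


if __name__ == "__main__":
    stamps = [0, 10, 20, 50, 60, 55]
    print(find_gaps(stamps, expected_interval_ms=10))
    print(moving_average([1, 2, 3, 4, 5], window=3))
```

Checking for gaps before smoothing matters because a filter applied across a missing stretch of data silently blends readings that were never adjacent in time.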

Addressing Challenges in Dataset Curation

Dataset curation is integral to the success of machine-learning projects. However, the process has its challenges. Addressing these challenges head-on is crucial for ensuring the quality and reliability of the curated datasets.

Imbalanced Datasets

One of the most common challenges in dataset curation is dealing with imbalanced datasets. An imbalanced dataset is one where certain data classes are overrepresented while others are underrepresented. This can lead to biased models that perform well on the overrepresented classes but poorly on the underrepresented ones.

For instance, in a machine learning project aimed at recognizing different sounds, a dataset with thousands of urban sounds but only a handful of rural sounds may leave the model struggling to recognize the rural ones. Addressing this requires techniques like oversampling the minority class, undersampling the majority class, or using synthetic data generation methods.
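Of the techniques just mentioned, random oversampling is the simplest to sketch: samples from the smaller classes are duplicated at random until every class is as large as the biggest one. The code below is a minimal standard-library illustration; the function name `oversample` and the `seed` parameter are assumptions for this example.

```python
import random
from collections import defaultdict


def oversample(samples, labels, seed=0):
    """Randomly duplicate minority-class samples until all classes
    have as many samples as the largest class."""
    rng = random.Random(seed)
    by_class = defaultdict(list)
    for sample, label in zip(samples, labels):
        by_class[label].append(sample)

    target = max(len(items) for items in by_class.values())
    out_samples, out_labels = [], []
    for label, items in by_class.items():
        # Draw duplicates with replacement from the class's own samples.
        extra = [rng.choice(items) for _ in range(target - len(items))]
        for sample in items + extra:
            out_samples.append(sample)
            out_labels.append(label)
    return out_samples, out_labels


if __name__ == "__main__":
    clips = ["u1", "u2", "u3", "u4", "u5", "r1", "r2"]
    tags = ["urban"] * 5 + ["rural"] * 2
    balanced, balanced_tags = oversample(clips, tags)
    print(len(balanced), balanced_tags.count("rural"))
```

Oversampling is best applied to the training split only, after the data has been divided; otherwise duplicates of the same sample can land in both training and test sets and inflate the measured accuracy.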

Data Privacy and Ethics

Another significant challenge is ensuring data privacy and adhering to ethical standards during dataset curation. With the increasing awareness and regulations around data privacy, such as GDPR, it's imperative to handle data carefully, ensuring that personal and sensitive information is anonymized or removed.

A recent article from Nature Computational Science highlighted the challenges faced during data curation related to pandemic data.[3] The article emphasized the importance of global data curation and standardization efforts to ensure rapid data integration and dissemination. It also highlighted the ethical, legal, and privacy issues hindering open data sharing. Such challenges are not exclusive to pandemic data but are relevant to any data curation effort.

Moreover, ethical considerations extend beyond privacy. For instance, curators should ensure that the data doesn't reinforce existing biases or lead to discrimination against specific populations based on gender, age, or location.
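To make the earlier point about anonymizing or removing personal information concrete, here is a minimal sketch that drops directly identifying fields and replaces a user identifier with a keyed hash. The field names (`user_id`, `name`, `email`) and function names are hypothetical. Note that keyed hashing is pseudonymization rather than full anonymization: anyone holding the key can re-link records, so the result may still count as personal data under regulations such as GDPR.

```python
import hashlib
import hmac


def pseudonymize(identifier: str, secret_key: bytes) -> str:
    """Replace an identifier with a stable keyed hash (HMAC-SHA256).

    The same identifier and key always give the same token, so records
    from one person can still be grouped without exposing who they are.
    """
    digest = hmac.new(secret_key, identifier.encode("utf-8"), hashlib.sha256)
    return digest.hexdigest()[:16]


def scrub_record(record, secret_key, id_field="user_id",
                 drop_fields=("name", "email")):
    """Return a copy of the record without the dropped fields and with
    the identifier field pseudonymized. The input is not modified."""
    cleaned = {k: v for k, v in record.items() if k not in drop_fields}
    if id_field in cleaned:
        cleaned[id_field] = pseudonymize(str(cleaned[id_field]), secret_key)
    return cleaned


if __name__ == "__main__":
    key = b"replace-with-a-secret-kept-outside-the-dataset"
    row = {"user_id": 42, "name": "Ada", "email": "ada@example.com",
           "reading": 3.5}
    print(scrub_record(row, key))
```

The key should be stored separately from the dataset; if it is discarded once curation is complete, the tokens can no longer be regenerated from the original identifiers.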

Tips and Tricks for Effective Dataset Curation

Dataset curation is a crucial step in the machine learning pipeline. Properly curated datasets ensure the accuracy of models and streamline the training process. Here are some actionable tips to enhance the dataset curation process:

1. Plan for Accuracy at the Source: It's more efficient to validate data at its origin than to assess its accuracy later. For instance, asking users to validate their data or using sampling and auditing can help estimate accuracy levels.

2. Annotate and Label: Annotating and labeling datasets during curation can simplify data management and troubleshooting. This can include adding timestamps or event locations. However, ensure that metadata is accurate to prevent inaccuracies during data transformation.

3. Prioritize Security and Privacy: Large datasets can be vulnerable to breaches. Security practices like encryption, de-identification, and a robust data governance model can mitigate risks. Edge Impulse's emphasis on on-device processing also underscores the importance of data privacy.

4. Anticipate Future Needs: Begin the data curation process with a clear vision of the end goal. Track how analytics and machine learning applications utilize datasets and refine the data aggregation process accordingly.

5. Balance Data Governance with Agility: Striking a balance between data governance and business agility is crucial. Centralized platforms can help users identify relevant data and processes for their analytics or machine learning projects.

6. Identify Business Needs: Ensure the curated data aligns with business objectives. Engage business stakeholders to understand their requirements early and avoid curating irrelevant data.
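The sampling-and-auditing idea from the first tip can be sketched as follows: draw a random subset of records, have a person verify each one, and estimate the dataset's overall accuracy from the results. The function names are illustrative, and the interval uses the normal approximation, which is only a rough guide for small samples or for accuracy values very close to 0 or 1.

```python
import math
import random


def draw_audit_sample(record_ids, sample_size, seed=0):
    """Pick a reproducible random subset of record IDs to audit."""
    population = list(record_ids)
    rng = random.Random(seed)
    return rng.sample(population, min(sample_size, len(population)))


def estimate_accuracy(audit_results, z=1.96):
    """Estimate accuracy from audit outcomes (True = verified correct).

    Returns (estimate, lower, upper), where the bounds are an approximate
    95% confidence interval when z is 1.96.
    """
    n = len(audit_results)
    if n == 0:
        raise ValueError("audit_results must not be empty")
    p = sum(audit_results) / n
    margin = z * math.sqrt(p * (1 - p) / n)
    return p, max(0.0, p - margin), min(1.0, p + margin)


if __name__ == "__main__":
    to_check = draw_audit_sample(range(10_000), 100)
    # Outcomes would come from a human review of the sampled records;
    # the values below are made up for illustration.
    outcomes = [True] * 90 + [False] * 10
    print(estimate_accuracy(outcomes))
```

A wide interval is a signal to audit more records before drawing conclusions about the dataset as a whole.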

Data-Specific Tips

1. Sensor Data: Consider the data collection frequency and noise potential for time-series data. Ensure that the data reflects real-world scenarios and is relevant to the problem.

2. Audio Data: Ensure sound quality by considering factors like background noise and audio format compatibility. Diverse acoustic environments can introduce variability, so curate data that captures this diversity.

3. Image Data: Image quality is paramount. Consider factors like resolution, format, and the context in which images are captured. Diverse visual contexts can provide a more comprehensive dataset for training models.

By adhering to these best practices, organizations can curate datasets that are not only high-quality but also tailored to their specific needs, ensuring the success of their machine-learning projects.
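For audio data, format compatibility can be checked automatically before training. The sketch below reads the header of each WAV file with Python's standard `wave` module and reports files whose sample rate or channel count differs from what the project expects. The expected values (16 kHz, mono) and the function names are assumptions for this example, and the `wave` module handles only uncompressed PCM WAV files.

```python
import wave


def wav_properties(path):
    """Read basic format information from a PCM WAV file header."""
    with wave.open(str(path), "rb") as wav:
        rate = wav.getframerate()
        return {
            "channels": wav.getnchannels(),
            "sample_rate": rate,
            "sample_width_bytes": wav.getsampwidth(),
            "duration_s": wav.getnframes() / rate,
        }


def find_mismatches(paths, expected_rate=16000, expected_channels=1):
    """Return {path: [issues]} for files that differ from the expected format."""
    problems = {}
    for path in paths:
        props = wav_properties(path)
        issues = []
        if props["sample_rate"] != expected_rate:
            issues.append(f"sample rate {props['sample_rate']} Hz")
        if props["channels"] != expected_channels:
            issues.append(f"{props['channels']} channels")
        if issues:
            problems[str(path)] = issues
    return problems
```

Files flagged this way can then be resampled or converted in a separate step, so that every clip reaches the model in the same format.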

Leveraging Edge Impulse for Dataset Curation

In machine learning, dataset curation is a pivotal step that can make or break the performance of a model. Edge Impulse, a leading development platform for machine learning on edge devices, offers tools that streamline and enhance the dataset curation process.

Edge Impulse's Data Collection Tools

Edge Impulse understands the importance of diverse and high-quality data. The platform allows developers to gather data from many sources, whether their own sensor hardware, public datasets, or data produced through simulation or synthetic data generation.[5] This flexibility helps ensure the dataset is comprehensive and relevant to the specific application.

Data Visualization and Analysis with Edge Impulse

Once data is collected, understanding and analyzing it becomes crucial. Edge Impulse provides advanced data analysis tools that help quickly detect data quality issues, for example by visually highlighting outliers or mislabeled data in a dataset. Whether you're working with sensor data, vision, or audio, the platform offers tailored tools that help ensure your dataset is of high quality and ready to be fed into a machine-learning model.[5]
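Independently of any particular platform, the idea of flagging outliers can be illustrated with a generic statistical rule. The sketch below marks values that fall more than 1.5 interquartile ranges outside the middle half of the data. It is not a description of how Edge Impulse's tools work; the function name and the `k` parameter are assumptions for this example.

```python
import statistics


def iqr_outliers(values, k=1.5):
    """Return the indices of values outside [Q1 - k*IQR, Q3 + k*IQR]."""
    q1, _, q3 = statistics.quantiles(values, n=4)
    iqr = q3 - q1
    low, high = q1 - k * iqr, q3 + k * iqr
    return [i for i, v in enumerate(values) if v < low or v > high]


if __name__ == "__main__":
    readings = [10, 11, 12, 11, 10, 12, 11, 95]
    print(iqr_outliers(readings))
```

A flagged value is a prompt for inspection rather than automatic deletion, since it may be a sensor glitch, a mislabeled sample, or a genuine rare event that the model should learn from.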

Conclusion

Dataset curation is the backbone of any successful machine-learning project. The quality and relevance of the data directly influence the model's performance. With platforms like Edge Impulse, the process of dataset curation becomes more streamlined and efficient. By leveraging the right tools and adhering to best practices, one can ensure that their machine learning models are built on a solid foundation of high-quality data.